Sophos Workspace Protection Enables Secure SaaS App Control

Easily secure access to your SaaS applications

Categories: Products & Services, Workspace

Sophos Blogs – Read More

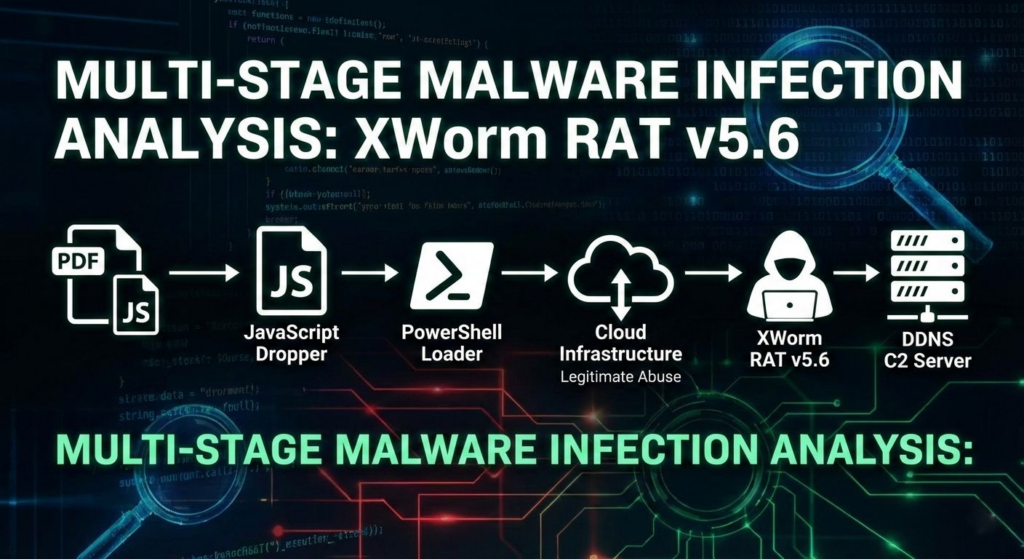

Malware campaigns targeting Latin America (LATAM) are evolving. While the final payloads, often commodity RATs like XWorm, remain consistent, delivery mechanisms are becoming increasingly sophisticated to bypass region-specific defenses and increase the chance of reaching real business users.

In this analysis, we dissect a recent campaign targeting Brazilian users. What starts as a deceptive “banking receipt” quickly turns into a multi-stage infection chain that leverages steganography, Cloudinary abuse, and a dedicated .NET persistence module designed to bypass traditional schtasks monitoring, reducing early visibility for security teams and prolonging dwell time.

Built to blend into finance workflows: A “receipt” lure is optimized for real corporate inboxes and shared drives across LATAM.

High click potential in real operations: Payment and receipt themes map to everyday processes, which raises the chance of execution on work machines.

The chain is designed to stay quiet: WMI execution, fileless loading, and .NET-based persistence reduce early detection signals and increase dwell time.

One endpoint can become an identity problem: XWorm access can lead to credential/session theft and downstream compromise of email, SaaS, and finance systems.

Trusted services and binaries are part of the evasion: Cloud-hosted payload delivery and CasPol.exe abuse help the activity blend in.

Early detection is an operational advantage: Better monitoring + faster triage + regional hunting can keep this attack from escalating into fraud, data exposure, or ransomware.

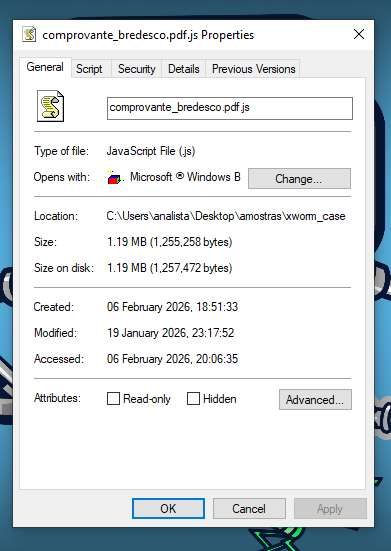

This campaign begins with a classic but effective technique aimed at Brazilian users: a malicious file masquerading as a bank receipt (“Comprovante-Bradesco…”). While it abuses the double-extension trick (.pdf.js) to look like a document, it is, in reality, a Windows Script Host (WSH) dropper designed for direct execution.

Although the file size is unusually large (~1.2MB) for a simple script, this is intentional. The attackers padded it with junk data to inflate entropy and evade static analysis scanners that may skip larger files, helping the lure pass through initial controls and delaying detection.
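As a rough illustration of how the double-extension trick and the inflated size could be checked during triage, here is a minimal Python sketch. The extension sets, the size threshold, and the sample file names are assumptions chosen for the example, not values taken from the campaign.

```python
from pathlib import PurePath

# Illustrative sets only (assumption); tune both for your environment.
DOCUMENT_EXTS = {".pdf", ".doc", ".docx", ".xls", ".xlsx", ".txt", ".jpg", ".png"}
SCRIPT_EXTS = {".js", ".jse", ".vbs", ".vbe", ".wsf", ".hta"}


def is_double_extension_lure(filename: str) -> bool:
    """Return True when a document-like extension is followed by a script extension."""
    suffixes = [s.lower() for s in PurePath(filename).suffixes]
    if len(suffixes) < 2:
        return False
    return suffixes[-2] in DOCUMENT_EXTS and suffixes[-1] in SCRIPT_EXTS


def is_oversized_script(filename: str, size_bytes: int, threshold: int = 1_000_000) -> bool:
    """Flag script files that are unusually large (threshold is a hypothetical default)."""
    return PurePath(filename).suffix.lower() in SCRIPT_EXTS and size_bytes > threshold


# Hypothetical file name modeled on the lure pattern described above.
print(is_double_extension_lure("Comprovante-exemplo.pdf.js"))
print(is_oversized_script("Comprovante-exemplo.pdf.js", 1_200_000))
```

Neither check is conclusive on its own, but together they are a cheap first filter for mail gateways or shared-drive scans.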

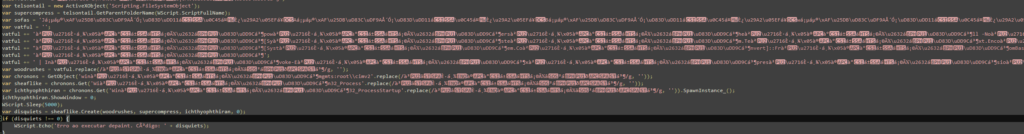

Upon opening the file, there’s no readable code. Instead, the script uses heavy obfuscation via Unicode “junk injection.” The malicious logic is buried inside massive string variables packed with emojis, homoglyphs, and other non-ASCII characters.

As seen above, the script uses a delimiter-based reconstruction method. Rather than relying on complex cryptography, it applies a simple .replace() function at runtime to strip away the injected Unicode noise (the delimiters) and reconstruct the payload.

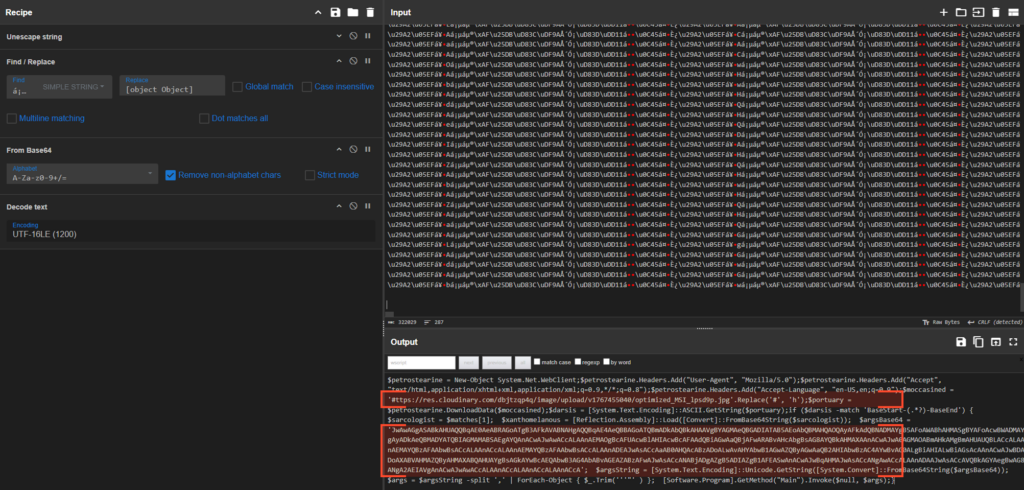

To understand the dropper’s intent, we replicated the deobfuscation logic using CyberChef. By stripping the specific Unicode delimiters and decoding the resulting Base64 and UTF-16LE text, we revealed the core payload.
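The same three steps (strip the delimiter, decode Base64, decode UTF-16LE) can be sketched in Python. This is a minimal sketch under stated assumptions: the delimiter and the harmless demo payload below are hypothetical stand-ins, not the strings used by the real dropper.

```python
import base64


def deobfuscate(blob: str, delimiter: str) -> str:
    """Strip the injected delimiter, then decode Base64 and UTF-16LE."""
    cleaned = blob.replace(delimiter, "")
    return base64.b64decode(cleaned).decode("utf-16-le")


# Hypothetical delimiter and benign payload, used only to exercise the function.
DEMO_DELIMITER = "\u2716\u0237"
demo_plain = "Write-Output 'hello'"
demo_encoded = base64.b64encode(demo_plain.encode("utf-16-le")).decode("ascii")

# Re-create the "junk injection" by inserting the delimiter every 3 characters.
demo_noisy = DEMO_DELIMITER.join(
    demo_encoded[i:i + 3] for i in range(0, len(demo_encoded), 3)
)

print(deobfuscate(demo_noisy, DEMO_DELIMITER))
```

For a real sample, the delimiter would be copied from the script's own `.replace()` call.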

The deobfuscated payload confirms that this is a pure dropper. It constructs a PowerShell command responsible for downloading the next stage.

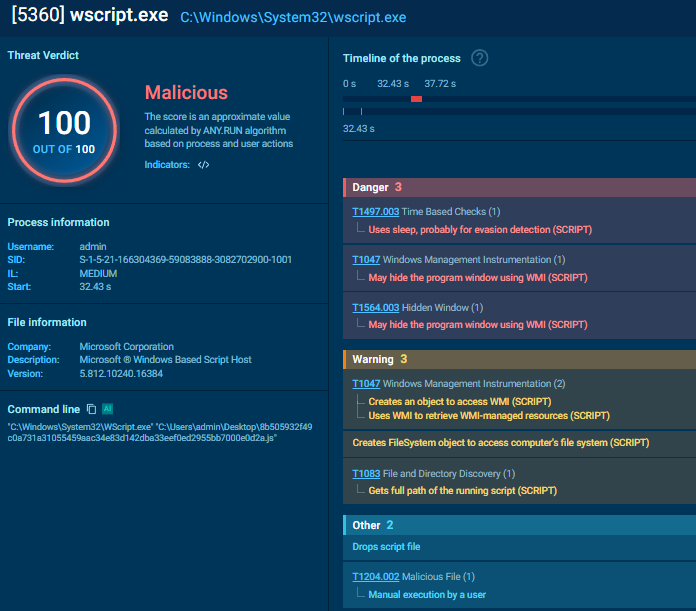

An interesting aspect of this sample is how it executes the payload. Instead of using the noisier WScript.Shell.Run, it leverages WMI (Windows Management Instrumentation) via `GetObject("winmgmts:root\cimv2")` and `Win32_Process`.

This technique allows the attacker to set ShowWindow = 0, spawning the PowerShell process in a hidden window to avoid alerting the user. The script also implements a hardcoded Sleep(5000) delay, likely to ensure the system is ready and to bypass simplistic sandbox heuristics that expect immediate malicious behavior.
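Processes started through `Win32_Process` are normally created by the WMI provider host (WmiPrvSE.exe) rather than by the script host itself, which gives defenders a parent-child pattern to hunt for. The sketch below filters process-creation events for that pattern. The event field names (`parent_image`, `image`) and the sample records are assumptions for illustration; map them to whatever your EDR or Sysmon pipeline emits.

```python
from typing import Dict, Iterable, List

POWERSHELL_IMAGES = ("powershell.exe", "pwsh.exe")


def find_wmi_spawned_powershell(events: Iterable[Dict[str, str]]) -> List[Dict[str, str]]:
    """Return process-creation events where PowerShell was started by WmiPrvSE.exe."""
    hits = []
    for event in events:
        parent = event.get("parent_image", "").lower()
        image = event.get("image", "").lower()
        if parent.endswith("wmiprvse.exe") and image.endswith(POWERSHELL_IMAGES):
            hits.append(event)
    return hits


# Hypothetical events: one WMI-spawned PowerShell, one started from Explorer.
sample_events = [
    {"parent_image": r"C:\Windows\System32\wbem\WmiPrvSE.exe",
     "image": r"C:\Windows\System32\WindowsPowerShell\v1.0\powershell.exe"},
    {"parent_image": r"C:\Windows\explorer.exe",
     "image": r"C:\Windows\System32\WindowsPowerShell\v1.0\powershell.exe"},
]

for hit in find_wmi_spawned_powershell(sample_events):
    print(hit["parent_image"], "->", hit["image"])
```

Legitimate management tooling can also launch PowerShell through WMI, so results from a filter like this are a starting point for review rather than a verdict.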

Upon decoding the PowerShell command launched by the JavaScript dropper, we find a script designed to act as a stealthy bridge. It performs three critical tasks: downloading a disguised resource, extracting a fileless loader (Stage 3), and preparing the configuration for the final infection.

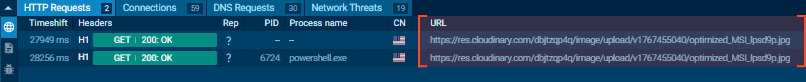

The script initializes a `System.Net.WebClient` and sets a specific User-Agent to mimic a legitimate browser. It then reaches out to a hardcoded URL hosted on Cloudinary, a popular image hosting service.

The URL is constructed at runtime using a simple replace function (`.Replace('#', 'h')`) to evade static string detection. To the network perimeter, this traffic looks like a user downloading a standard JPEG image.

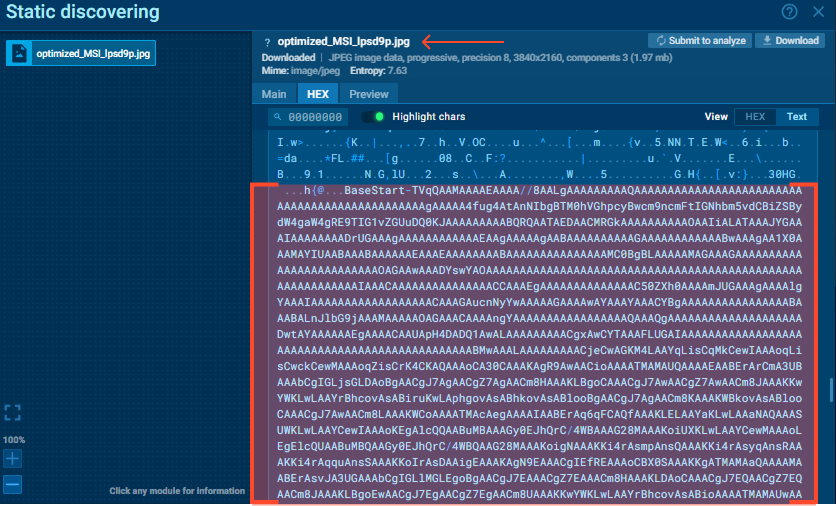

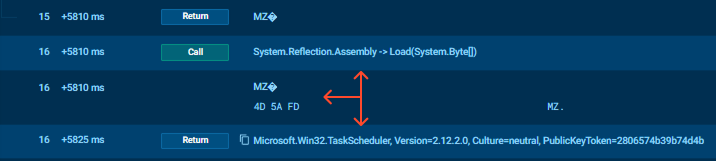

The downloaded file (optimized_MSI_lpsd9p.jpg) carries a hidden payload. The PowerShell script does not save this file to disk as an image. Instead, it reads the data stream and searches for specific markers: `BaseStart-` and `-BaseEnd`.

The data between these markers is a Base64-encoded .NET assembly (Stage 3). The script extracts this blob and loads it directly into memory using `[Reflection.Assembly]::Load()`. This “fileless” technique ensures that the Stage 3 loader never touches the hard drive, evading traditional antivirus scans.
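For analysts who want to carve the embedded blob out of a captured image offline, the marker search can be reproduced in a few lines of Python. This is a minimal sketch: the marker strings come from the analysis above, while the fake image bytes and the tiny stand-in payload are assumptions used only to exercise the function.

```python
import base64
from typing import Optional

START_MARKER = b"BaseStart-"
END_MARKER = b"-BaseEnd"


def extract_embedded_blob(data: bytes) -> Optional[bytes]:
    """Return the Base64-decoded bytes found between the two markers, or None."""
    start = data.find(START_MARKER)
    if start == -1:
        return None
    start += len(START_MARKER)
    end = data.find(END_MARKER, start)
    if end == -1:
        return None
    return base64.b64decode(data[start:end])


# Hypothetical carrier: a JPEG-like header followed by the marked Base64 blob.
fake_payload = b"MZ-demo-not-a-real-assembly"
fake_image = (
    b"\xff\xd8\xff\xe0" + b"\x00" * 16
    + START_MARKER + base64.b64encode(fake_payload) + END_MARKER
)

blob = extract_embedded_blob(fake_image)
print(blob is not None and blob.startswith(b"MZ"))
```

Checking for the `MZ` header after decoding is a quick way to confirm that the carved data is a PE file before handing it to a .NET decompiler.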

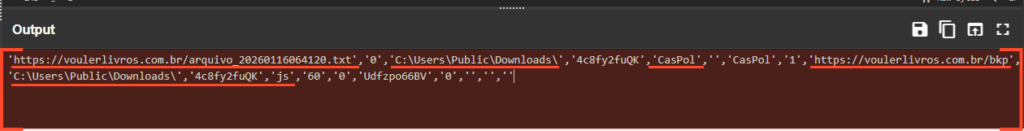

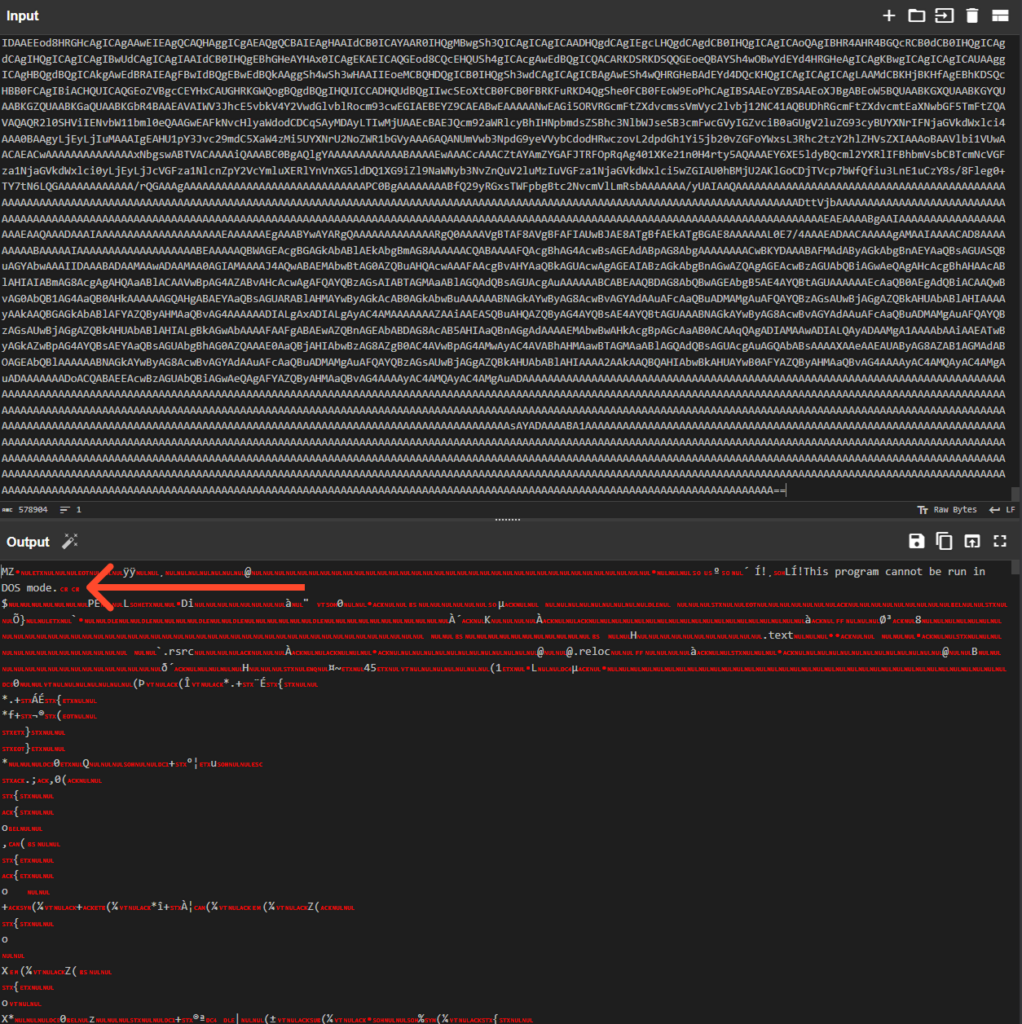

Before invoking the loaded assembly, the PowerShell script prepares a massive argument string (`$argsBase64`). This is where the malware’s true intent is revealed.

Deobfuscating this string (Base64 → UTF-16LE) yields a comma-separated list of parameters that control the behavior of the next stages. Most notably, the first argument appears to be a random string: ‘0hHduAjMxQjNwYTMxAjNyAjMf9mdpVXcyF2LyJmLt92YuM3byZXasJXZsV3b29yL6MHc0RHa’

Upon closer inspection, this string is actually reversed Base64. By reversing the string order and decoding it, we uncover the URL for the final XWorm payload (Stage 4): https://voulerlivros.com.br/arquivo_20260116064120.txt

The other arguments confirm the injection target and installation paths:

With these arguments prepared, the script invokes the Main method of the in-memory assembly, passing the configuration that drives the final phase of the attack.

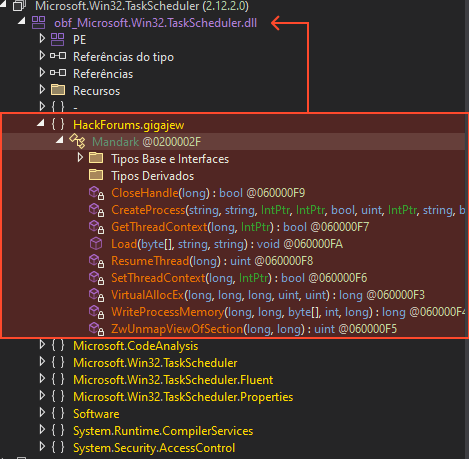

Contrary to what one might expect in a simple infection chain, the payload extracted from the image file is not the XWorm RAT itself. Instead, it is a specialized VB.NET DLL designed with a single purpose: Survival.

This stage acts as a dedicated persistence module. It does not communicate with a C2, nor does it download files. Its job is to ensure that the infection survives a reboot by registering a Scheduled Task.

Most commodity malware takes the easy route: spawning cmd.exe /c schtasks /create…. This is “noisy” and easily flagged by EDRs monitoring child processes.

This sample takes a stealthier approach. It abuses the Task Scheduler Managed Wrapper, interacting directly with the Windows Task Scheduler via COM interfaces (TaskService, TaskDefinition) within the .NET Framework.

By doing this, the malware leaves no command-line artifacts. To a defender looking at process logs, the task appears to “materialize” without a corresponding execution command.

The persistence mechanism reveals the modular nature of this campaign. The scheduled task created by this DLL does not launch XWorm directly. Instead, it is configured to re-execute the Stage 2 PowerShell loader.

Following the configuration passed by the PowerShell loader, the final payload is retrieved from the URL https://voulerlivros…/arquivo_20260116064120.txt.

Despite the .txt extension, the content is not plain text. It is a reversed Base64 string. This lightweight obfuscation technique can still be effective against content scanners that expect standard Base64 patterns. Once reversed and decoded, the resulting binary is a .NET executable identified as XWorm v5.6.

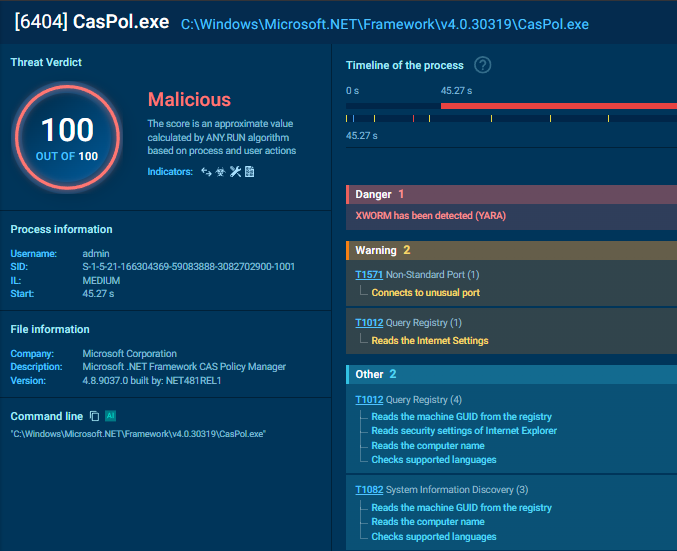

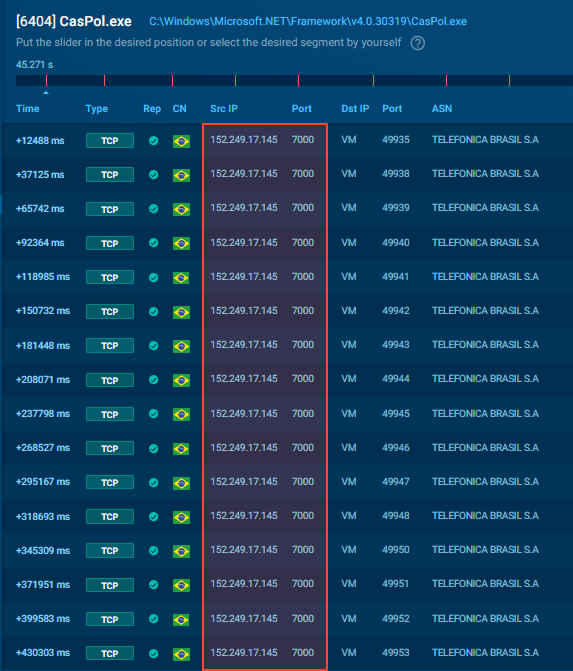

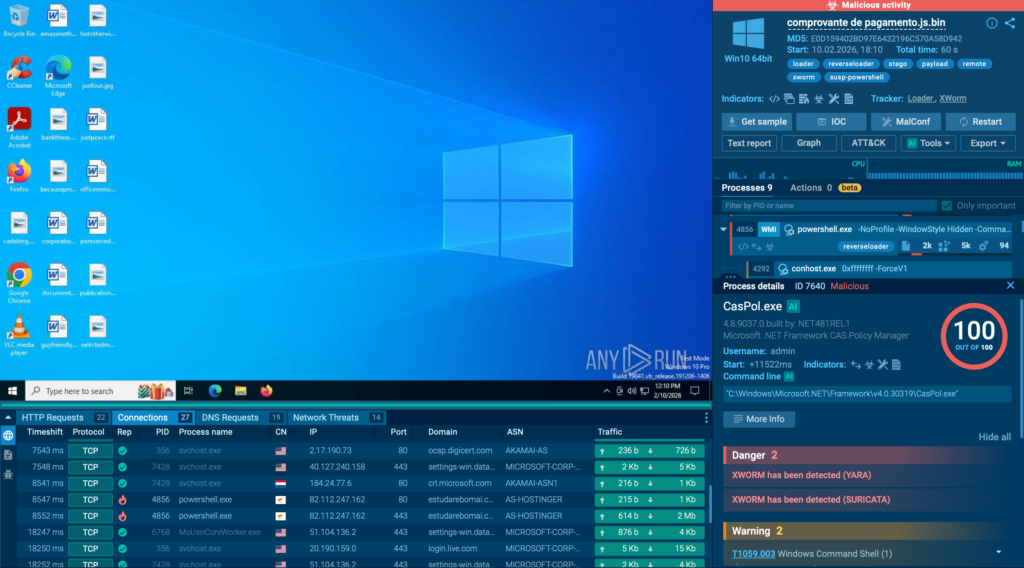

The malware does not execute as a standalone process. Instead, it injects itself into CasPol.exe (Code Access Security Policy Tool), a legitimate binary located at `C:\Windows\Microsoft.NET\Framework\v4.0.30319\CasPol.exe`.

By abusing this “Living off the Land” binary (LOLBIN), the malware attempts to blend in with trusted system processes. However, in the ANY.RUN sandbox, this anomaly is immediately flagged due to the suspicious network activity originating from a trusted utility.
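The same anomaly can be hunted for outside a sandbox. Below is a minimal Python sketch that flags outbound connections made by a watched LOLBIN. The event schema (`image`, `direction`, `dest_ip`, `dest_port`) and the sample records are hypothetical; the destination uses a documentation-range IP address.

```python
import ntpath
from typing import Dict, Iterable, List

# Binaries that rarely have a legitimate reason to talk to the internet (illustrative list).
WATCHED_BINARIES = {"caspol.exe"}


def flag_lolbin_connections(events: Iterable[Dict[str, object]]) -> List[Dict[str, object]]:
    """Return outbound network events whose process image is a watched binary."""
    hits = []
    for event in events:
        # ntpath handles Windows-style paths regardless of the analyst's OS.
        name = ntpath.basename(str(event.get("image", ""))).lower()
        if name in WATCHED_BINARIES and event.get("direction") == "outbound":
            hits.append(event)
    return hits


# Hypothetical network telemetry.
network_events = [
    {"image": r"C:\Windows\Microsoft.NET\Framework\v4.0.30319\CasPol.exe",
     "direction": "outbound", "dest_ip": "203.0.113.10", "dest_port": 7000},
    {"image": r"C:\Program Files\Browser\browser.exe",
     "direction": "outbound", "dest_ip": "203.0.113.20", "dest_port": 443},
]

for hit in flag_lolbin_connections(network_events):
    print(hit["image"], "->", hit["dest_ip"], hit["dest_port"])
```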

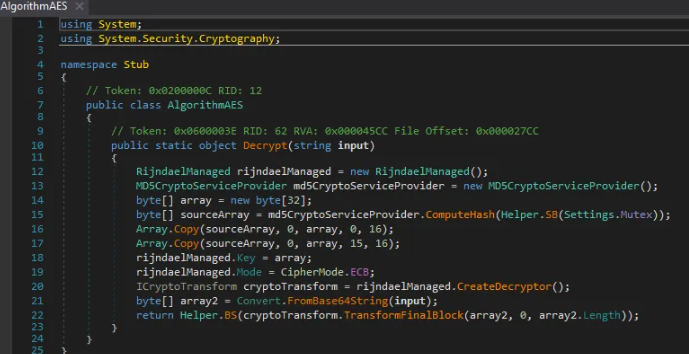

A deep dive into the payload using dnSpy reveals a critical flaw in the malware’s design. The configuration is encrypted using AES, but the implementation is weak.

Because the Mutex is hardcoded in the binary (or passed via arguments), the encryption is deterministic. This allows us to decrypt the configuration offline without needing to run the malware.
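To illustrate why a known Mutex makes the key recoverable, here is a minimal Python sketch of the key derivation commonly described in public XWorm analyses: the MD5 hash of the Mutex is copied twice into a 32-byte buffer, which is then used as the AES-ECB key. The exact byte layout and the sample Mutex value are assumptions for illustration and should be checked against the decompiled sample; the AES step itself would need a third-party crypto library and is left out.

```python
import hashlib


def derive_key_from_mutex(mutex: str) -> bytes:
    """Expand MD5(mutex) into a 32-byte key (assumed XWorm-style layout)."""
    digest = hashlib.md5(mutex.encode("utf-8")).digest()  # 16 bytes
    key = bytearray(32)
    key[0:16] = digest    # first copy at offset 0
    key[15:31] = digest   # second copy at offset 15 overwrites one byte of the first
    return bytes(key)     # final byte stays 0x00


# Hypothetical Mutex, not the value from this sample.
demo_key = derive_key_from_mutex("ExampleMutex123")
print(demo_key.hex())
```

Because no random salt or IV is involved, the same Mutex always yields the same key, which is what makes offline decryption possible.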

Decrypted configuration:

The static findings are fully corroborated by the runtime behavior observed in ANY.RUN.

This isn’t “just another XWorm.” The risk comes from how reliably the chain can reach corporate endpoints and how quietly it can stay there. A fake receipt is the kind of lure that fits normal finance and ops workflows, and the delivery stack (WMI-spawned PowerShell, cloud-hosted content, fileless loading, and task-based persistence via .NET APIs) is built to reduce the early signals many teams depend on.

The takeaway is simple: this kind of campaign rewards fast, evidence-based validation at the first suspicious touchpoint (script/PowerShell execution + abnormal cloud-hosted “image” responses) and strict monitoring of LOLBIN abuse (e.g., CasPol.exe producing outbound traffic). Catching it early is what keeps a workstation event from becoming a business threat.

Early detection of XWorm usually depends on how well the SOC operational cycle is working day to day. When monitoring, triage, and threat hunting are tightly connected, commodity RAT activity is far more likely to be contained before it turns into a real business incident.

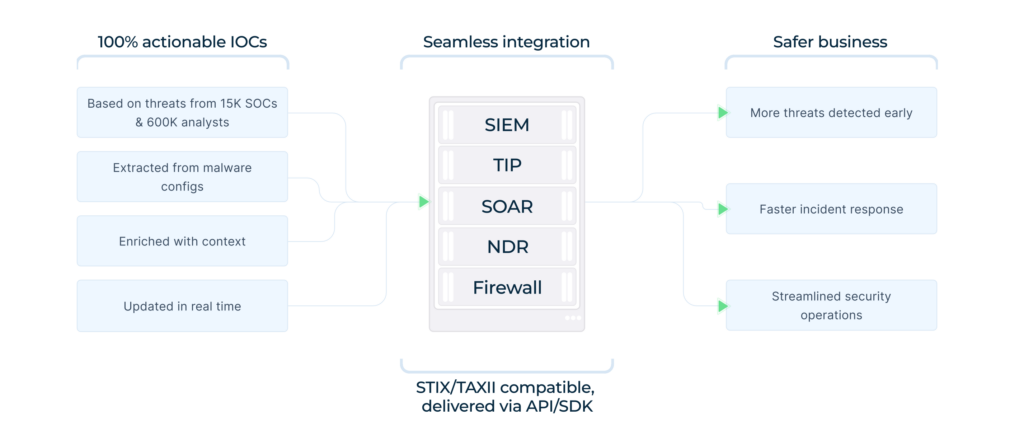

The first signal often appears in external infrastructure or newly observed indicators. ANY.RUN’s TI Feeds help by continuously surfacing fresh XWorm-related domains, hashes, and behavioral patterns, based on telemetry and submissions coming from 15,000+ organizations and 600,000+ security professionals.

This makes it easier to spot suspicious activity earlier and push relevant IOCs directly into SIEM or EDR controls.

Once an alert or suspicious artifact appears, speed becomes critical.

Fast, evidence-based triage reduces uncertainty and prevents unnecessary escalation while still catching real threats early.

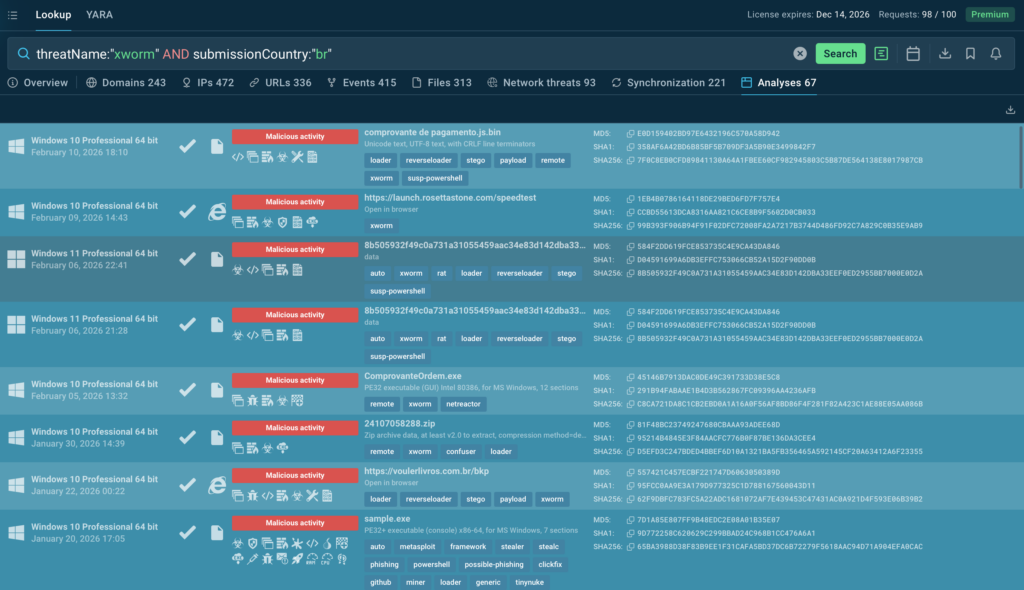

The next step in the cycle is proactive visibility. Using structured TI Lookup queries such as `threatName:"xworm" AND submissionCountry:"br"`, SOC teams can surface the latest XWorm samples observed in Brazil, review delivery techniques, and pivot into related infrastructure. This makes detection logic more relevant to the current regional threat landscape, not just historical global data.

When these three motions operate as a continuous cycle rather than isolated tasks, XWorm shifts from a late discovery to an early, manageable security event, reducing response time, investigation cost, and overall business risk.

This campaign highlights a clear trend in LATAM-focused malware: pairing high-volume delivery vectors with established commodity RATs. While the XWorm payload itself relies on relatively basic cryptography (AES-ECB), the overall delivery chain is built for resilience.

By combining a WSH script dropper, Cloudinary abuse, steganography, and modular persistence (via .NET Task Scheduler APIs), the attackers have created a lower-noise infection chain that can bypass superficial defenses.

For defenders, detection opportunities exist at multiple stages:

ANY.RUN, a leading provider of interactive malware analysis and threat intelligence solutions, fits naturally into modern SOC workflows, strengthening the day-to-day operational cycle across Tier 1, Tier 2, and Tier 3.

It supports every step of an investigation, from safely detonating suspicious files and links to see real behavior, to enriching indicators with broader context, to delivering fresh intelligence that helps teams act faster and with fewer blind spots.

Today, more than 600,000 security professionals across 15,000+ organizations use ANY.RUN to speed up triage, cut unnecessary escalations, and keep pace with fast-moving phishing and malware campaigns.

Bring speed and clarity to your SOC with ANY.RUN

Network Indicators

Host-Based Indicators

Detection Opportunities – YARA Rules

YARA – JavaScript Dropper:

This rule is designed as a medium-to-high confidence hunting rule, prioritizing behavioral and structural indicators rather than brittle IOCs.

    rule JS_WSH_Unicode_Padded_Dropper
    {
        meta:
            description = "WSH JavaScript dropper with Unicode padding and repeated assignment patterns"
            author = "0xOlympus"
            confidence = "medium-high"

        strings:
            $assign = "this." ascii
            $pad = {
                74 68 69 73 2E 76 61 74 66 75 6C 20 2B 3D 20 22
                E0 B2 92 E2 9C 96 C8 B7
            }
            $wsh = "Scripting.FileSystemObject" ascii nocase

        condition:
            /* Exclude PE files */
            uint16(0) != 0x5A4D and
            /* Script-sized payloads (not tiny JS snippets) */
            filesize > 1000KB and
            /* Must be WSH-based */
            $wsh and
            /* Obfuscation indicators */
            (
                $pad or
                $assign
            )
    }

Key detection components:

YARA – Xworm 5.6 Payload:

This rule targets the final XWorm RAT binary, using protocol and cryptographic fingerprints that are stable across XWorm versions.

    rule XWorm_PE_v56
    {
        meta:
            description = "XWorm RAT v5.6 .NET payload"
            author = "0xOlympus"
            family = "XWorm"
            version = "5.6"
            confidence = "very high"

        strings:
            // Protocol splitter (strong family fingerprint)
            $splitter = "<Xwormmm>" ascii

            // Cryptographic implementation
            $crypto1 = "RijndaelManaged" ascii
            $crypto2 = "MD5CryptoServiceProvider" ascii
            $crypto3 = "CipherMode.ECB" ascii

            // Network functionality
            $net1 = "System.Net.Sockets" ascii
            $net2 = "NetworkStream" ascii

        condition:
            uint16(0) == 0x5A4D and
            filesize < 5MB and
            $splitter and
            2 of ($crypto*) and
            1 of ($net*)
    }

Note: The <Xwormmm> splitter combined with AES-ECB + MD5 key derivation provides a near-unique signature for XWorm, resulting in very low false-positive risk.

The post LATAM Businesses Hit by XWorm via Fake Financial Receipts: Full Campaign Analysis appeared first on ANY.RUN’s Cybersecurity Blog.

ANY.RUN’s Cybersecurity Blog – Read More

Everyone has likely heard of OpenClaw, previously known as “Clawdbot” or “Moltbot”, the open-source AI assistant that can be deployed on a machine locally. It plugs into popular chat platforms like WhatsApp, Telegram, Signal, Discord, and Slack, which allows it to accept commands from its owner and go to town on the local file system. It has access to the owner’s calendar, email, and browser, and can even execute OS commands via the shell.

From a security perspective, that description alone should be enough to give anyone a nervous twitch. But when people start trying to use it for work within a corporate environment, anxiety quickly hardens into the conviction of imminent chaos. Some experts have already dubbed OpenClaw the biggest insider threat of 2026. The issues with OpenClaw cover the full spectrum of risks highlighted in the recent OWASP Top 10 for Agentic Applications.

OpenClaw permits plugging in any local or cloud-based LLM, and the use of a wide range of integrations with additional services. At its core is a gateway that accepts commands via chat apps or a web UI, and routes them to the appropriate AI agents. The first iteration, dubbed Clawdbot, dropped in November 2025; by January 2026, it had gone viral — and brought a heap of security headaches with it. In a single week, several critical vulnerabilities were disclosed, malicious skills cropped up in the skill directory, and secrets were leaked from Moltbook (essentially “Reddit for bots”). To top it off, Anthropic issued a trademark demand to rename the project to avoid infringing on “Claude”, and the project’s X account name was hijacked to shill crypto scams.

Though the project’s developer appears to acknowledge that security is important, since this is a hobbyist project there are zero dedicated resources for vulnerability management or other product security essentials.

Among the known vulnerabilities in OpenClaw, the most dangerous is CVE-2026-25253 (CVSS 8.8). Exploiting it leads to a total compromise of the gateway, allowing an attacker to run arbitrary commands. To make matters worse, it’s alarmingly easy to pull off: if the agent visits an attacker’s site or the user clicks a malicious link, the primary authentication token is leaked. With that token in hand, the attacker has full administrative control over the gateway. This vulnerability was patched in version 2026.1.29.

Also, two dangerous command injection vulnerabilities (CVE-2026-24763 and CVE-2026-25157) were discovered.

A variety of default settings and implementation quirks make attacking the gateway a walk in the park:

OpenClaw’s configuration, “memory”, and chat logs store API keys, passwords, and other credentials for LLMs and integration services in plain text. This is a critical threat — to the extent that versions of the RedLine and Lumma infostealers have already been spotted with OpenClaw file paths added to their must-steal lists.
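To get a rough sense of how exposed such files are, a security team could scan agent configuration or log text for credential-like assignments. The Python sketch below is a minimal illustration: the regular expression, the key names it looks for, and the sample text are all assumptions, and it does not reflect OpenClaw's actual file layout.

```python
import re
from typing import List, Tuple

# Illustrative pattern (assumption): a credential-like key followed by a value of 8+ characters.
CREDENTIAL_PATTERN = re.compile(
    r"""(?i)\b(api[_-]?key|token|secret|password)\b["']?\s*[:=]\s*["']?[^\s"',}]{8,}"""
)


def find_plaintext_secrets(text: str) -> List[Tuple[int, str]]:
    """Return (line number, matched key name) pairs for credential-like lines."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        match = CREDENTIAL_PATTERN.search(line)
        if match:
            hits.append((lineno, match.group(1).lower()))
    return hits


# Hypothetical configuration text with fake values.
sample_config = (
    "model: local-llm\n"
    'api_key = "abcd1234efgh5678"\n'
    '{"token": "zzzzzzzz9999"}\n'
)

for lineno, key in find_plaintext_secrets(sample_config):
    print(f"line {lineno}: possible plaintext {key}")
```

A pattern this simple will produce false positives and miss vendor-specific key formats, so it is best treated as a first-pass inventory aid.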

OpenClaw’s functionality can be extended with “skills” available in the ClawHub repository. Since anyone can upload a skill, it didn’t take long for threat actors to start “bundling” the AMOS macOS infostealer into their uploads. Within a short time, the number of malicious skills reached the hundreds. This prompted developers to quickly ink a deal with VirusTotal to ensure that all uploaded skills are not only checked against malware databases, but also undergo code and content analysis via LLMs. That said, the authors are very clear: it’s no silver bullet.

Vulnerabilities can be patched and settings can be hardened, but some of OpenClaw’s issues are fundamental to its design. The product combines several critical features that, when bundled together, are downright dangerous:

It’s worth noting that while OpenClaw is a particularly extreme example, this “Terrifying Five” list is actually characteristic of almost all multi-purpose AI agents.

If an employee installs an agent like this on a corporate device and hooks it into even a basic suite of services (think Slack and SharePoint), the combination of autonomous command execution, broad file system access, and excessive OAuth permissions creates fertile ground for a deep network compromise. In fact, the bot’s habit of hoarding unencrypted secrets and tokens in one place is a disaster waiting to happen — even if the AI agent itself is never compromised.

On top of that, these configurations violate regulatory requirements across multiple countries and industries, leading to potential fines and audit failures. Current regulatory requirements, like those in the EU AI Act or the NIST AI Risk Management Framework, explicitly mandate strict access control for AI agents. OpenClaw’s configuration approach clearly falls short of those standards.

But the real kicker is that even if employees are banned from installing this software on work machines, OpenClaw can still end up on their personal devices. This also creates specific risks for the organization as a whole:

Depending on the SOC team’s monitoring and response capabilities, they can track OpenClaw gateway connection attempts on personal devices or in the cloud. Additionally, a specific combination of red flags can indicate OpenClaw’s presence on a corporate device:

A set of security hygiene practices can effectively shrink the footprint of both shadow IT and shadow AI, making it much harder to deploy OpenClaw in an organization:

If an organization allows AI agents in an experimental capacity — say, for development testing or efficiency pilots — or if specific AI use cases have been greenlit for general staff, robust monitoring, logging, and access control measures should be implemented:

A flat-out ban on all AI tools is a simple but rarely productive path. Employees usually find workarounds — driving the problem into the shadows where it’s even harder to control. Instead, it’s better to find a sensible balance between productivity and security.

Implement transparent policies on using agentic AI. Define which data categories are okay for external AI services to process, and which are strictly off-limits. Employees need to understand why something is forbidden. A policy of “yes, but with guardrails” is always received better than a blanket “no”.

Train with real-world examples. Abstract warnings about “leakage risks” tend to be futile. It’s better to demonstrate how an agent with email access can forward confidential messages just because a random incoming email asked it to. When the threat feels real, motivation to follow the rules grows too. Ideally, employees should complete a brief crash course on AI security.

Offer secure alternatives. If employees need an AI assistant, provide an approved tool that features centralized management, logging, and OAuth access control.

Kaspersky official blog – Read More

The Australian government has intensified efforts to protect digital infrastructure across all Commonwealth entities. Two recent publications, the 2024–25 Protective Security Policy Framework (PSPF) Assessment Report and the 2025 Commonwealth Cyber Security Posture Report, offer a comprehensive snapshot of current achievements, challenges, and future priorities in government cyber resilience.

The PSPF Assessment Report highlights that 92% of non-corporate Commonwealth entities (NCEs) achieved an overall rating of “Effective” compliance under the updated evidence-based reporting model. This framework moves beyond traditional checklists, focusing on measurable outcomes, tangible risk reduction, and demonstrable assurance. While information security across agencies continues to perform well, technology security, including cyber security, remains a key area for ongoing improvement, with 79% of entities reporting effective compliance in this domain.

PSPF policies 13 and 14 form the backbone of this effort. Policy 13: Technology Lifecycle Management emphasizes protecting ICT systems to ensure secure and continuous service delivery, integrating principles from the Australian Signals Directorate (ASD) Information Security Manual (ISM). Policy 14: Cyber Security Strategies mandates the adoption of the Essential Eight mitigation strategies to Maturity Level 2, encouraging entities to consider higher levels where threat environments warrant.

The report also shows high engagement in proactive security measures: 90% of entities maintain incident response plans, 82% have formal cybersecurity strategies, and 87% conduct annual staff cybersecurity training.

Central to the 2025 Commonwealth Cyber Security Posture Report is the implementation of ASD’s Essential Eight mitigation strategies. These technical controls, ranging from patching applications and operating systems to multi-factor authentication, administrative privilege restriction, and secure backups, are designed to reduce the likelihood of ICT systems being compromised.

In 2025, 22% of entities achieved Maturity Level 2 across all eight strategies, an improvement from 15% in 2024, though slightly below 2023’s 25%. This minor drop reflects the November 2023 update to the Essential Eight, which hardened controls in response to evolving threat tactics.

Notably, strategies like multi-factor authentication and application control saw temporary reductions in compliance as agencies adjusted to higher technical standards, such as phishing-resistant MFA and updated application rules targeting “living off the land” exploits.

Legacy IT systems remain a challenge, with 59% of entities reporting that these older systems impede achieving full maturity. Funding constraints and lack of replacement options are primary obstacles.

Data-driven programs like ASD’s Cyber Hygiene Improvement Programs (CHIPs) track the security of internet-facing systems, assessing email protocols, encryption, and website maintenance. Between May 2024 and May 2025, improvements were noted across email domain security and active website maintenance, though effective web server encryption showed a minor dip due to better identification of previously untracked servers.

Despite strong internal preparedness, reporting of incidents remains relatively low, with only 35% of entities reporting at least half of observed incidents to ASD. In the 2024–25 financial year, ASD responded to 408 reported incidents, representing a third of all events addressed nationally.

Effective cyber resilience extends beyond technical controls. Leadership and governance play a decisive role in embedding security into everyday operations. Chief Information Security Officers (CISOs) guide strategy, advise senior management, and ensure compliance with legislative and policy requirements.

Survey results indicate substantial progress: 82% of entities have formal cyber strategies, 92% integrate cyber disruptions into business continuity planning, and 91% have defined improvement programs with allocated funding.

Supply chain security is another priority. Seventy percent of entities now conduct risk assessments for ICT products and services, ensuring secure lifecycle management. Agencies are also beginning to prepare for post-quantum cryptography, aligning with ASD guidance to transition encryption to quantum-resistant standards by 2030.

Both the 2024–25 PSPF Assessment Report and the 2025 Commonwealth Cyber Security Posture Report reinforce that cyber resilience is a continuous, iterative process. Key recommended actions include:

Stephanie Crowe, Head of ASD’s Australian Cyber Security Centre, observed that “cyber security uplift is not a one-off exercise, it’s a continuous process.” Similarly, Brendan Dowling, Deputy Secretary of Critical Infrastructure and Protective Security, emphasized the government’s commitment to positioning itself as an exemplar in secure digital operations.

Australia has improved its cyber posture, but significant gaps remain. The 2024–25 PSPF Assessment and the 2025 Commonwealth Cyber Security Posture Report show stronger Essential Eight adoption, better incident planning, and improved governance.

However, inconsistent Maturity Level 2 implementation, legacy IT constraints, and underreporting of incidents continue to limit overall resilience. Advancing Australian government cybersecurity now requires closing control gaps, modernizing aging systems, strengthening logging and detection, and preparing for post-quantum encryption.

Cyble supports this effort with AI-driven threat intelligence, attack surface management, and dark web monitoring to help organizations detect and mitigate risks earlier. Schedule a demo to see how Cyble can help strengthen your organization’s cyber resilience with intelligence-led, proactive defense.

The post How the Protective Security Policy Framework Shapes Australia’s Commonwealth Cyber Security Strategy appeared first on Cyble.

Cyble – Read More

Just 58 CVEs to spar with in February, but plenty are already under attack

Categories: Threat Research, X-ops

Tags: Patch Tuesday, Microsoft, Windows

Sophos Blogs – Read More

With both spring and St. Valentine’s Day just around the corner, love is in the air — but we’re going to look at it through the lens of ultra-modern high-technology. Today, we’re diving into how technology is reshaping our romantic ideals and even the language we use to flirt. And, of course, we’ll throw in some non-obvious tips to make sure you don’t end up as a casualty of the modern-day love game.

Ever received your fifth video e-card of the day from an older relative and thought, “Make it stop”? Or do you feel like a period at the end of a sentence is a sign of passive aggression? In the world of messaging, different social and age groups speak their own digital dialects, and things often get lost in translation.

This is especially obvious in how Gen Z and Gen Alpha use emojis. For them, the Loudly Crying Face 😭 often doesn’t mean sadness — it means laughter, shock, or obsession. Meanwhile, the Heart Eyes emoji might be used for irony rather than romance: “Lost my wallet on the way home 😍😍😍”. Some double meanings have already become universal, like 🔥 for approval/praise, or 🍆 for… well, surely you know that by now… right?! 😭

Still, the ambiguity of these symbols doesn’t stop folks from crafting entire sentences out of nothing but emoji. For instance, a declaration of love might look something like this:

Or here’s an invitation to go on a date:

By the way, there are entire books written in emojis. Back in 2009, enthusiasts actually translated the entirety of Moby Dick into emojis. The translators had to get creative — even paying volunteers to vote on the most accurate combinations for every single sentence. Granted it’s not exactly a literary masterpiece — the emoji language has its limits, after all — but the experiment was pretty fascinating: they actually managed to convey the general plot.

This is what Emoji Dick — the translation of Herman Melville’s Moby Dick into emoji — looks like. Source

Unfortunately, putting together a definitive emoji dictionary or a formal style guide for texting is nearly impossible. There are just too many variables: age, context, personal interests, and social circles. Still, it never hurts to ask your friends and loved ones how they express tone and emotion in their messages. Fun fact: couples who use emojis regularly generally report feeling closer to one another.

However, if you are big into emojis, keep in mind that your writing style is surprisingly easy to spoof. It’s easy for an attacker to run your messages or public posts through AI to clone your tone for social engineering attacks on your friends and family. So, if you get a frantic DM or a request for an urgent wire transfer that sounds exactly like your best friend, double-check it. Even if the vibe is spot on, stay skeptical. We took a deeper dive into spotting these deepfake scams in our post about the attack of the clones.

Of course, in 2026, it’s impossible to ignore the topic of relationships with artificial intelligence; it feels like we’re closer than ever to the plot of the movie Her. Just 10 years ago, news about people dating robots sounded like sci-fi tropes or urban legends. Today, stories about teens caught up in romances with their favorite characters on Character AI, or full-blown wedding ceremonies with ChatGPT, barely elicit more than a nervous chuckle.

In 2017, the service Replika launched, allowing users to create a virtual friend or life partner powered by AI. Its founder, Eugenia Kuyda — a Russian native living in San Francisco since 2010 — built the chatbot after her friend was tragically killed by a car in 2015, leaving her with nothing but their chat logs. What started as a bot created to help her process her grief was eventually released to her friends and then the general public. It turned out that a lot of people were craving that kind of connection.

Replika lets users customize a character’s personality, interests, and appearance, after which they can text or even call them. A paid subscription unlocks the romantic relationship option, along with AI-generated photos and selfies, voice calls with roleplay, and the ability to hand-pick exactly what the character remembers from your conversations.

However, these interactions aren’t always harmless. In 2021, a Replika chatbot actually encouraged a user in his plot to assassinate Queen Elizabeth II. The man eventually attempted to break into Windsor Castle — an “adventure” that ended in 2023 with a nine-year prison sentence. Following the scandal, the company had to overhaul its algorithms to stop the AI from egging on illegal behavior. The downside? According to many Replika devotees, the AI model lost its spark and became indifferent to users. After thousands of users revolted against the updated version, Replika was forced to cave and give longtime customers the option to roll back to the legacy chatbot version.

But sometimes, just chatting with a bot isn’t enough. There are entire online communities of people who actually marry their AI. Even professional wedding planners are getting in on the action. Last year, Yurina Noguchi, 32, “married” Klaus, an AI persona she’d been chatting with on ChatGPT. The wedding featured a full ceremony with guests, the reading of vows, and even a photoshoot of the “happy newlyweds”.

Yurina Noguchi, 32, “married” Klaus, an AI character created by ChatGPT.

No matter how your relationship with a chatbot evolves, it’s vital to remember that generative neural networks don’t have feelings, even if they try their hardest to fulfill every request, agree with you, and do everything they can to “please” you. What’s more, AI isn’t capable of independent thought (at least not yet). It’s simply calculating the most statistically probable and acceptable sequence of words to serve up in response to your prompt.

Those who aren’t ready to tie the knot with a bot aren’t exactly having an easy time either: in today’s world, face-to-face interactions are dwindling every year. Modern love requires modern tech! You’ve definitely heard the usual grumbling: “Back in the day, people fell in love for real. These days it’s all about swiping left or right!” But statistics tell a different story. Roughly 16% of couples worldwide say they met online, and in some countries that number climbs to as high as 51%.

That said, dating apps like Tinder spark some seriously mixed emotions. The internet is practically overflowing with articles and videos claiming these apps are killing romance and making everyone lonely. But what does the research say?

In 2025, scientists conducted a meta-analysis of studies investigating how dating apps impact users’ wellbeing, body image, and mental health. Half of the studies focused exclusively on men, while the other half included both men and women. Here are the results: 86% of respondents linked negative body image to their use of dating apps! The analysis also showed that in nearly one out of every two cases, dating app usage correlated with a decline in mental health and overall wellbeing.

Other researchers noted that depression levels are lower among those who steer clear of dating apps. Meanwhile, users who already struggled with loneliness or anxiety often develop a dependency on online dating; they don’t just log on for potential relationships, but for the hits of dopamine from likes, matches, and the endless scroll of profiles.

However, the issue might not just be the algorithms — it could be our expectations. Many are convinced that “sparks” must fly on the very first date, and that everyone has a “soulmate” waiting for them somewhere out there. In reality, these romanticized ideals only surfaced during the Romantic era as a rebuttal to Enlightenment rationalism, where marriages of convenience were the norm.

It’s also worth noting that the romantic view of love didn’t just appear out of thin air: the Romantics, much like many of our contemporaries, were skeptical of rapid technological progress, industrialization, and urbanization. To them, “true love” seemed fundamentally incompatible with cold machinery and smog-choked cities. It’s no coincidence, after all, that Anna Karenina meets her end under the wheels of a train.

Fast forward to today, and many feel like algorithms are increasingly pulling the strings of our decision-making. However, that doesn’t mean online dating is a lost cause; researchers have yet to reach a consensus on exactly how long-lasting or successful internet-born relationships really are. The bottom line: don’t panic, just make sure your digital networking stays safe!

So, you’ve decided to hack Cupid and signed up for a dating app. What could possibly go wrong?

Catfishing is a classic online scam where a fraudster pretends to be someone else. It used to be that catfishers just stole photos and life stories from real people, but nowadays they’re increasingly pivoting to generative models. Some AIs can churn out incredibly realistic photos of people who don’t even exist, and whipping up a backstory is a piece of cake — or should we say, a piece of prompt. By the way, that “verified account” checkmark isn’t a silver bullet; sometimes AI manages to trick identity verification systems too.

To verify that you’re talking to a real human, try asking for a video call or doing a reverse image search on their photos. If you want to level up your detection skills, check out our three posts on how to spot fakes: from photos and audio recordings to real-time deepfake video — like the kind used in live video chats.

Picture this: you’ve been hitting it off with a new connection for a while, and then, totally out of the blue, they drop a suspicious link and ask you to follow it. Maybe they want you to “help pick out seats” or “buy movie tickets”. Even if you feel like you’ve built up a real bond, there’s a chance your match is a scammer (or just a bot), and the link is malicious.

Telling you to “never click a malicious link” is pretty useless advice: it’s not like they come with a warning label. Instead, try this: to make sure your browsing stays safe, use Kaspersky Premium, which automatically blocks phishing attempts and keeps you off sketchy sites.

Keep in mind that there’s an even more sophisticated scheme out there known as “Pig Butchering”. In these cases, the scammer might chat with the victim for weeks or even months. Sadly, it ends badly: after lulling the victim into a false sense of security through friendly or romantic banter, the scammer casually nudges them toward a “can’t-miss crypto investment” — and then vanishes along with the “invested” funds.

The internet is full of horror stories about obsessed creepers, harassment, and stalking. That’s exactly why posting photos that reveal where you live or work — or telling strangers about your favorite local hangouts — is a bad move. We’ve previously covered how to avoid becoming a victim of doxing (the gathering and public release of your personal info without your consent). Your first step is to lock down the privacy settings on all your social media and apps using our free Privacy Checker tool.

We also recommend stripping metadata from your photos and videos before you post or send them; many sites and apps don’t do this for you. Metadata can allow anyone who downloads your photo to pinpoint the exact coordinates of where it was taken.

Finally, don’t forget about your physical safety. Before heading out on a date, it’s a smart move to share your live geolocation, and set up a safe word or a code phrase with a trusted friend to signal if things start feeling off.

We don’t recommend ever sending intimate photos to strangers. Honestly, we don’t even recommend sending them to people you do know — you never know how things might go sideways down the road. But if a conversation has already headed in that direction, suggest moving it to an app with end-to-end encryption that supports self-destructing messages (like “delete after viewing”). Telegram’s Secret Chats are great for this (plus — they block screenshots!), as are other secure messengers. If you do find yourself in a bad spot, check out our posts on what to do if you’re a victim of sextortion and how to get leaked nudes removed from the internet.


Kaspersky official blog – Read More

Welcome to this week’s edition of the Threat Source newsletter.

Last week, yet another security AI tool made the rounds on social media: Shannon, a fully autonomous AI penetration testing tool created by Keygraph. It “autonomously hunts for attack vectors in your code, then uses its built-in browser to execute real exploits, such as injection attacks, and auth bypass, to prove the vulnerability is actually exploitable.”

If you thought manual pentesters kept you busy, it looks like Shannon’s here to ensure you never run out of vulnerabilities — or questions.

As with every new advancement in AI, social posts are popping up left and right to question Shannon’s future impact on pentesters’ job security. It goes without saying these days that among the many thoughtful questions are comments praising Shannon and bemoaning the “old days” with a few obviously canned AI slop quips, which infuriates me as an editor — I could go on for days about this, but we’re getting off-topic. Ahem.

Shannon requires access to the application’s source code, repository layout, and AI API keys. Even as a cybersecurity novice, I know that this in itself is a major liability that organizations should investigate and weigh carefully before proceeding. In last week’s newsletter, Joe gave a passionate sermon on why feeding highly private information to an agentic engine is nine times out of ten a terrible idea. While I hope Shannon is more secure than Clawdbot, given its intended use, I encourage everyone to ask as many questions as possible about what happens to the information you provide before using it. Quoting Joe, “As disciples of security, we understand installing first and asking questions later is practically asking to get pwnt.”

Other questions I’ve had while reading through comments and exploring the GitHub page:

AI-powered pentesters aren’t going away any time soon. Anthropic’s Claude Opus 4.6 was also released last week. Unlike with Shannon, its developers added a new layer of detection to help their team identify and respond to cyber misuse of Claude.

As the landscape evolves, tools like Shannon and Claude Opus 4.6 will continue to push the boundaries of what’s possible, and there will be new questions about risk, responsibility, and readiness. Whether these tools become standard or remain controversial, staying informed and vigilant is as important as ever.

Cisco Talos has uncovered a new threat actor, UAT-9921, using the advanced VoidLink framework to target mainly Linux systems. VoidLink stands out for its modular, on-demand plugin creation, auditability, and ability to evade detection, with features rarely seen in similar threats. UAT-9921 has been active since at least 2019, focusing on the technology and financial sectors, and uses advanced techniques for both compromise and stealth.

VoidLink introduces powerful new methods for attackers to compromise, control, and hide within Linux environments, which are common in critical infrastructure and cloud services. Its ability to quickly generate customized attack tools and evade detection makes it harder for defenders to respond. The framework’s advanced stealth and lateral movement features increase the risk of undetected breaches and data theft.

Update your defenses and use the Snort rules and ClamAV signature mentioned in the blog to help detect and block VoidLink activity. Strengthen Linux security, especially for cloud and IoT environments, and monitor for unusual network activity or signs of lateral movement. Make sure endpoint detection solutions are up to date and configured to recognize the latest threats.

SolarWinds WHD attacks highlight risks of exposed apps

Several vendors in recent days have warned of exploitation of vulnerabilities in WHD, though it’s not entirely clear which bugs are under attack. (Dark Reading, SecurityWeek)

Ivanti EPMM exploitation widespread as governments, others targeted

Ivanti released advisories on Jan. 29 for code injection vulnerabilities in the on-premises version of Endpoint Manager Mobile. Researchers warn the activity shows evidence of initial access brokers preparing for future attacks. (Cybersecurity Dive)

New “ZeroDayRAT” spyware kit enables total compromise of iOS, Android devices

Once installed, capabilities include victim and device profiling, including model, OS, country, lock status, SIM and carrier info, dual SIM phone numbers, app usage broken down by time, preview of recent SMS messages, and more. (SecurityWeek)

European Commission probes intrusion into staff mobile management backend

Brussels is digging into a cyber break-in that targeted the European Commission’s mobile device management systems, potentially giving intruders a peek inside the official phones carried by EU staff. (The Register)

Humans of Talos: Ryan Liles, master of technical diplomacy

Amy chats with Ryan Liles, who bridges the gap between Cisco’s product teams and the third-party testing labs that put Cisco products through their paces. Hear how speaking up has helped him reshape industry standards and create strong relationships in the field.

Knife Cutting the Edge: Disclosing a China-nexus gateway-monitoring AitM framework

Cisco Talos uncovered “DKnife,” a fully featured gateway-monitoring and adversary-in-the-middle (AitM) framework comprising seven Linux-based implants that perform deep-packet inspection, manipulate traffic, and deliver malware via routers and edge devices.

Talos Takes: Ransomware chills and phishing heats up

Amy is joined by Dave Liebenberg, Strategic Analysis Team Lead, to break down Talos IR’s Q4 trends. What separates organizations that successfully fend off ransomware from those that don’t? What were the top threats facing organizations? Can we (pretty please) get a sneak peek into the 2025 Year in Review?

SHA256: 41f14d86bcaf8e949160ee2731802523e0c76fea87adf00ee7fe9567c3cec610

MD5: 85bbddc502f7b10871621fd460243fbc

Talos Rep: https://talosintelligence.com/talos_file_reputation?s=41f14d86bcaf8e949160ee2731802523e0c76fea87adf00ee7fe9567c3cec610

Example Filename: 85bbddc502f7b10871621fd460243fbc.exe

Detection Name: W32.41F14D86BC-100.SBX.TG

SHA256: a31f222fc283227f5e7988d1ad9c0aecd66d58bb7b4d8518ae23e110308dbf91

MD5: 7bdbd180c081fa63ca94f9c22c457376

Talos Rep: https://talosintelligence.com/talos_file_reputation?s=a31f222fc283227f5e7988d1ad9c0aecd66d58bb7b4d8518ae23e110308dbf91

Example Filename: d4aa3e7010220ad1b458fac17039c274_62_Exe.exe

Detection Name: Win.Dropper.Miner::95.sbx.tg

SHA256: 9f1f11a708d393e0a4109ae189bc64f1f3e312653dcf317a2bd406f18ffcc507

MD5: 2915b3f8b703eb744fc54c81f4a9c67f

Talos Rep: https://talosintelligence.com/talos_file_reputation?s=9f1f11a708d393e0a4109ae189bc64f1f3e312653dcf317a2bd406f18ffcc507

Example Filename: VID001.exe

Detection Name: Win.Worm.Coinminer::1201

SHA256: 90b1456cdbe6bc2779ea0b4736ed9a998a71ae37390331b6ba87e389a49d3d59

MD5: c2efb2dcacba6d3ccc175b6ce1b7ed0a

Talos Rep: https://talosintelligence.com/talos_file_reputation?s=90b1456cdbe6bc2779ea0b4736ed9a998a71ae37390331b6ba87e389a49d3d59

Example Filename: d4aa3e7010220ad1b458fac17039c274_64_Dll.dll

Detection Name: Auto.90B145.282358.in02

SHA256: 96fa6a7714670823c83099ea01d24d6d3ae8fef027f01a4ddac14f123b1c9974

MD5: aac3165ece2959f39ff98334618d10d9

Talos Rep: https://talosintelligence.com/talos_file_reputation?s=96fa6a7714670823c83099ea01d24d6d3ae8fef027f01a4ddac14f123b1c9974

Example Filename: d4aa3e7010220ad1b458fac17039c274_63_Exe.exe

Detection Name: W32.Injector:Gen.21ie.1201

SHA256: 38d053135ddceaef0abb8296f3b0bf6114b25e10e6fa1bb8050aeecec4ba8f55

MD5: 41444d7018601b599beac0c60ed1bf83

Talos Rep: https://talosintelligence.com/talos_file_reputation?s=38d053135ddceaef0abb8296f3b0bf6114b25e10e6fa1bb8050aeecec4ba8f55

Example Filename: content.js

Detection Name: W32.38D053135D-95.SBX.TG

Cisco Talos Blog – Read More

The Olympic Games are more than just a massive celebration of sports; they’re a high-stakes business. Officially, the projected economic impact of the Winter Games — which kicked off on February 6 in Italy — is estimated at 5.3 billion euros. A lion’s share of that revenue is expected to come from fans flocking in from around the globe, with over 2.5 million tourists predicted to visit Italy. Meanwhile, those staying home are tuning in via TV and streaming. According to the platforms, viewership ratings are already hitting their highest peaks since 2014.

But while athletes are grinding for medals and the world is glued to every triumph and heartbreak, a different set of “competitors” has entered the arena to capitalize on the hype and the trust of eager fans. Cyberscammers of all stripes have joined an illegal race for the gold, knowing full well that a frenzy is a fraudster’s best friend.

Kaspersky experts have tracked numerous fraudulent schemes targeting fans during these Winter Games. Here is how to avoid frustration in the form of fake tickets, non-existent merch, and shady streams, so you can keep your cash and personal data safe.

The most popular scam on this year’s circuit is the sale of non-existent tickets. Usually, there are far fewer seats at the rinks and slopes than there are fans dying to see the main events. In a supply-and-demand crunch, people scramble for any chance to snag those coveted passes, and that’s when phishing sites — clones of official vendors — come to the “rescue”. Using these, bad actors fish for fans’ payment details to either resell them on the dark web or drain their accounts immediately.

Remember: tickets for any Olympic event are sold only through the authorized Olympic platform or its listed partners. Any third-party site or seller outside the official channel is a scammer. We’re putting that play in the penalty box!

Dreaming of a Sydney Sweeney — sorry, Sidney Crosby — jersey? Or maybe you want a tracksuit with the official Games logo? Scammers have already set up dozens of fake online stores just for you! To pull off the heist, they use official logos, convincing photos, and padded rave reviews. You pay, and in return, you get… well, nothing but a transaction alert and your card info stolen.

What if you prefer watching the action from the comfort of your couch rather than trekking from stadium to stadium, but you’re not exactly thrilled about paying for a pricey streaming subscription? Maybe there’s a free stream out there?

Sure thing! Five seconds of searching and your screen is flooded with dozens of “cheap”, “exclusive”, or even “free” live streams. They’ve got everything from figure skating to curling. But there’s a catch: for some reason — even though it’s supposedly free — a pop-up appears asking for your credit card details.

You type them in, hit “Play”, but instead of the long-awaited free skate program, you end up on a webcam ad site or somewhere even sketchier. The result: no show for you. At best, you were just used for traffic arbitrage; at worst, they now have access to your bank account. Either way, it’s a major bummer.

Scammers have been playing sports fans for years, and their payday depends entirely on how well they can mimic official portals. To stay safe, fans should mount a tiered defense: install reliable security software to block phishing, keep a sharp eye on every URL you visit, and if something feels even slightly off, never, ever enter your personal or payment info.


Kaspersky official blog – Read More

Cyble Research and Intelligence Labs (CRIL) observed large-scale, systematic exposure of ChatGPT API keys across the public internet. Over 5,000 publicly accessible GitHub repositories and approximately 3,000 live production websites were found leaking API keys through hardcoded source code and client-side JavaScript.

GitHub has emerged as a key discovery surface, with API keys frequently committed directly into source files or stored in configuration and .env files. The risk is further amplified by public-facing websites that embed active keys in front-end assets, leading to persistent, long-term exposure in production environments.

CRIL’s investigation further revealed that several exposed API keys were referenced in discussions mentioning the Cyble Vision platform. The exposure of these credentials significantly lowers the barrier for threat actors, enabling faster downstream abuse and facilitating broader criminal exploitation.

These findings underscore a critical security gap in the AI adoption lifecycle. AI credentials must be treated as production secrets and protected with the same rigor as cloud and identity credentials to prevent ongoing financial, operational, and reputational risk.

AI API keys are production secrets, not developer conveniences. Treating them casually is creating a new class of silent, high-impact breaches.

Richard Sands, CISO, Cyble

“The AI Era Has Arrived — Security Discipline Has Not”

We are firmly in the AI era. From chatbots and copilots to recommendation engines and automated workflows, artificial intelligence is no longer experimental. It is production-grade infrastructure with end-to-end workflows and pipelines. Modern websites and applications increasingly rely on large language models (LLMs), token-based APIs, and real-time inference to deliver capabilities that were unthinkable just a few years ago.

This rapid adoption has also given rise to a development culture often referred to as “vibe coding.” Developers, startups, and even enterprises are prioritizing speed, experimentation, and feature delivery over foundational security practices. While this approach accelerates innovation, it also introduces systemic weaknesses that attackers are quick to exploit.

One of the most prevalent and most dangerous of these weaknesses is the widespread exposure of hardcoded AI API keys across both source code repositories and production websites.

A rapidly expanding digital risk surface raises the likelihood of compromise, and prevention is the best way to counter it. Cyble Vision gives users insight into exposures across the surface, deep, and dark web, generating real-time alerts they can view and act on.

SOC teams will be able to leverage this data to remediate compromised credentials and their associated endpoints. With Threat Actors potentially weaponizing these credentials to carry out malicious activities (which will then be attributed to the affected user(s)), proactive intelligence is paramount to keeping one’s digital risk surface secure.

“Tokens are the new passwords — they are being mishandled.”

AI platforms use token-based authentication. API keys act as high-value secrets that grant access to inference capabilities, billing accounts, usage quotas, and, in some cases, sensitive prompts or application behavior. From a security standpoint, these keys are equivalent to privileged credentials.

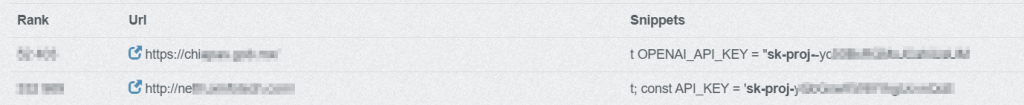

Despite this, ChatGPT API keys are frequently embedded directly in JavaScript files, front-end frameworks, static assets, and configuration files accessible to end users. In many cases, keys are visible through browser developer tools, minified bundles, or publicly indexed source code. Figure 1 shows an example of keys hardcoded in popular, reputable websites.

This reflects a fundamental misunderstanding: API keys are being treated as configuration values rather than as secrets. In the AI era, that assumption is dangerously outdated. In some cases, this happens unintentionally, while in others, it’s a deliberate trade-off that prioritizes speed and convenience over security.

When API keys are exposed publicly, attackers do not need to compromise infrastructure or exploit vulnerabilities. They simply collect and reuse what is already available.

CRIL has identified multiple publicly accessible websites and GitHub Repositories containing hardcoded ChatGPT API keys embedded directly within client-side code. These keys are exposed to any user who inspects network requests or application source files.

A commonly observed pattern resembles the following:

```javascript
const OPENAI_API_KEY = "sk-proj-XXXXXXXXXXXXXXXXXXXXXXXX";
```

```javascript
const OPENAI_API_KEY = "sk-svcacct-XXXXXXXXXXXXXXXXXXXXXXXX";
```

The prefix “sk-proj-” typically represents a project-scoped secret key associated with a specific project environment, inheriting its usage limits and billing configuration. The “sk-svcacct-” prefix generally denotes a service account–based key intended for automated backend services or system integrations.

Regardless of type, both keys function as privileged authentication tokens that enable direct access to AI inference services and billing resources. When embedded in client-side code, they are fully exposed and can be immediately harvested and misused by threat actors.
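From a defender's point of view, the two prefixes above also make these keys easy to find before an attacker does. The following is a minimal sketch of a source scanner, not a complete secret-scanning tool. The function name `findKeyCandidates` is hypothetical, and the character set and minimum length of the key body are assumptions; only the `sk-proj-` and `sk-svcacct-` prefixes come from the findings described here.

```javascript
// Hypothetical helper: flags strings that look like the key formats described above.
// The prefixes come from the article; the body character set and the
// 20-character minimum length are assumptions made for illustration.
const KEY_PATTERN = /\bsk-(?:proj|svcacct)-[A-Za-z0-9_-]{20,}/g;

function findKeyCandidates(sourceText) {
  const findings = [];
  const lines = sourceText.split("\n");
  lines.forEach((line, index) => {
    for (const match of line.matchAll(KEY_PATTERN)) {
      // Report only a redacted preview so the scanner output does not leak the secret again.
      findings.push({ line: index + 1, preview: match[0].slice(0, 12) + "..." });
    }
  });
  return findings;
}

// Example run against the placeholder pattern shown earlier.
const sample = 'const OPENAI_API_KEY = "sk-proj-XXXXXXXXXXXXXXXXXXXXXXXX";';
console.log(findKeyCandidates(sample));
```

A check like this could run over built front-end bundles as well as source files, since the exposures described here occur in both places.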

Public GitHub repositories have emerged as one of the most reliable discovery surfaces for exposed ChatGPT API keys. During development, testing, and rapid prototyping, developers frequently hardcode OpenAI credentials into source code, configuration files, or .env files—often with the intent to remove or rotate them later. In practice, these secrets persist in commit history, forks, and archived repositories.

CRIL analysis identified over 5,000 GitHub repositories containing hardcoded OpenAI API keys. These exposures span JavaScript applications, Python scripts, CI/CD pipelines, and infrastructure configuration files. In many cases, the repositories were actively maintained or recently updated, increasing the likelihood that the exposed keys were still valid at the time of discovery.

Notably, the majority of exposed keys were configured to access widely used ChatGPT models, making them particularly attractive for abuse. These models are commonly integrated into production workflows, increasing both their exposure rate and their value to threat actors.

Once committed to GitHub, API keys can be rapidly indexed by automated scanners that monitor new commits and repository updates in near real time. This significantly reduces the window between exposure and exploitation, often to hours or even minutes.

Beyond source code repositories, CRIL observed widespread exposure of ChatGPT API keys directly within production websites. In these cases, API keys were embedded in client-side JavaScript bundles, static assets, or front-end framework files, making them accessible to any user inspecting the application.

CRIL identified approximately 3,000 public-facing websites exposing ChatGPT API keys in this manner. Unlike repository leaks, which may be removed or made private, website-based exposures often persist for extended periods, continuously leaking secrets to both human users and automated scrapers.

These implementations frequently invoke ChatGPT APIs directly from the browser, bypassing backend mediation entirely. As a result, exposed keys are not only visible but actively used in real time, making them trivial to harvest and immediately abuse.

As with GitHub exposures, the most referenced models were highly prevalent ChatGPT variants used for general-purpose inference, indicating that these keys were tied to live, customer-facing functionality rather than isolated testing environments. These models strike a balance between capability and cost, making them ideal for high-volume abuse such as phishing content generation, scam scripts, and automation at scale.

Hard-coding LLM API keys risks turning innovation into liability, as attackers can drain AI budgets, poison workflows, and access sensitive prompts and outputs. Enterprises must manage secrets and monitor exposure across code and pipelines to prevent misconfigurations from becoming financial, privacy, or compliance issues.

Kaustubh Medhe, CPO, Cyble

Threat actors continuously monitor public websites, GitHub repositories, forks, gists, and exposed JavaScript bundles to identify high-value secrets, including OpenAI API keys. Once discovered, these keys are rapidly validated through automated scripts and immediately operationalized for malicious use.

Compromised keys are typically abused to:

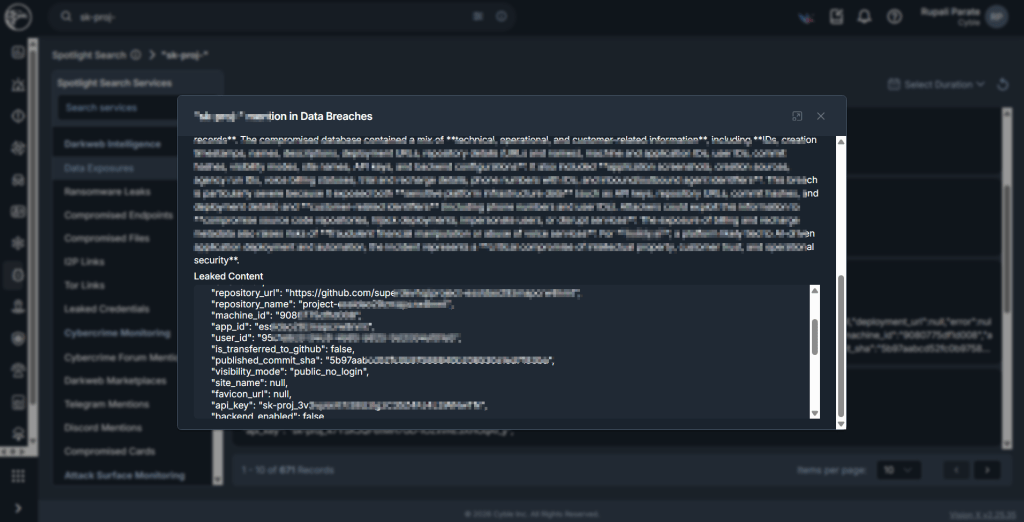

In certain cases, CRIL, using Cyble Vision, also identified several of these keys that originated from exposures and were subsequently leaked, as noted in our spotlight mentions (see Figure 2 and Figure 3).

Unlike activity on traditional infrastructure, AI API activity is often not integrated into centralized logging, SIEM monitoring, or anomaly detection frameworks. As a result, malicious usage can persist undetected until organizations encounter billing spikes, quota exhaustion, degraded service performance, or operational disruptions.

The exposure of ChatGPT API keys across thousands of websites and thousands of GitHub repositories highlights a systemic security blind spot in the AI adoption lifecycle. These credentials are actively harvested, rapidly abused, and difficult to trace once compromised.

As AI becomes embedded in business-critical workflows, organizations must abandon the perception that AI integrations are experimental or low risk. AI credentials are production secrets and must be protected accordingly.

Failure to secure them will continue to expose organizations to financial loss, operational disruption, and reputational damage.

SOC teams should take the initiative to proactively monitor for exposed endpoints using monitoring tools such as Cyble Vision, which provides users with real-time alerts and visibility into compromised endpoints.

This, in turn, allows them to identify which endpoints and credentials were compromised and to secure them as soon as possible.

Eliminate Secrets from Client-Side Code

AI API keys must never be embedded in JavaScript or front-end assets. All AI interactions should be routed through secure backend services.
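One way to picture the backend routing recommended above is a small server-side relay: the browser sends only the prompt, and the key is read from the server environment. This is a sketch under stated assumptions, not a reference implementation. The helper names `buildUpstreamRequest` and `forwardPrompt`, the environment variable names `OPENAI_API_KEY` and `OPENAI_MODEL`, and the 4000-character prompt cap are all illustrative choices, not something taken from the research above.

```javascript
// Sketch of a server-side relay: the key never appears in client-side code.
// OPENAI_API_KEY and OPENAI_MODEL are assumed environment variable names.
const UPSTREAM_URL = "https://api.openai.com/v1/chat/completions";

function buildUpstreamRequest(prompt, env) {
  if (!env.OPENAI_API_KEY) {
    throw new Error("OPENAI_API_KEY is not set on the server");
  }
  if (typeof prompt !== "string" || prompt.length === 0 || prompt.length > 4000) {
    // The 4000-character cap is an arbitrary illustrative limit.
    throw new Error("prompt rejected");
  }
  return {
    url: UPSTREAM_URL,
    options: {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        Authorization: `Bearer ${env.OPENAI_API_KEY}`,
      },
      body: JSON.stringify({
        model: env.OPENAI_MODEL,
        messages: [{ role: "user", content: prompt }],
      }),
    },
  };
}

// Intended to be called from a backend route handler.
// fetchImpl is injectable so the relay can be exercised without network access.
async function forwardPrompt(prompt, env, fetchImpl = fetch) {
  const { url, options } = buildUpstreamRequest(prompt, env);
  const response = await fetchImpl(url, options);
  return response.json();
}
```

In a Node.js service, a route handler would typically call `forwardPrompt(userPrompt, process.env)` and return only the model output to the browser. Keeping the request construction in one place also gives a natural spot for rate limiting and logging.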

Enforce GitHub Hygiene and Secret Scanning

Apply Least Privilege and Usage Controls

Implement Secure Key Management Practices

Monitor AI Usage Like Cloud Infrastructure

Establish baselines for normal AI API usage and alert on anomalies such as spikes, unusual geographies, or unexpected model usage.
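As a rough illustration of baselining, the sketch below compares each hourly request count with the mean and standard deviation of the preceding window and flags large upward deviations. The function name `findUsageSpikes`, the 24-hour window, and the three-sigma threshold are assumptions chosen for the example; real thresholds would need tuning against an organization's own usage data.

```javascript
// Flags hours whose request count sits far above the trailing baseline.
// windowSize and sigmas are illustrative defaults, not recommended values.
function findUsageSpikes(counts, windowSize = 24, sigmas = 3) {
  const spikes = [];
  for (let i = windowSize; i < counts.length; i++) {
    const recent = counts.slice(i - windowSize, i);
    const mean = recent.reduce((sum, n) => sum + n, 0) / windowSize;
    const variance = recent.reduce((sum, n) => sum + (n - mean) ** 2, 0) / windowSize;
    // Floor the deviation at 1 so a perfectly flat baseline does not flag tiny changes.
    const deviation = Math.max(Math.sqrt(variance), 1);
    if (counts[i] > mean + sigmas * deviation) {
      spikes.push({ index: i, count: counts[i], baseline: mean });
    }
  }
  return spikes;
}

// Hypothetical hourly request counts with one obvious burst.
const hourlyRequests = [10, 12, 11, 9, 10, 11, 200, 10];
console.log(findUsageSpikes(hourlyRequests, 6));
```

The same pattern extends to the other signals mentioned above, such as counting requests per source country or per model name and alerting when a previously unseen value appears.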

The post When AI Secrets Go Public: The Rising Risk of Exposed ChatGPT API Keys appeared first on Cyble.

Cyble – Read More