How my smart home became my best defense against brutal spring allergies – and pollen

I’ve tested dozens of ‘allergy-friendly’ smart gadgets over the past year, and these six are the most effective.

Latest news – Read More

Across the Talos 2025 Year in Review, state-sponsored threat activity from China, Russia, North Korea, and Iran was driven by varying motivations, such as espionage, disruption, financial gain, and geopolitical influence.

But when you look at how these operations actually unfold, similar tactics, techniques, and procedures (TTPs) keep appearing: access through vulnerabilities and identity, and access that remains under the radar for a considerable period of time.

Here are the dominant themes from the state-sponsored section of the Talos Year in Review, available now.

China-nexus threat activity stood out this year for both volume and efficiency, with Talos investigations increasing by nearly 75% compared to 2024.

Newly disclosed vulnerabilities were exploited almost immediately (e.g., ToolShell), sometimes before patches were widely available. At the same time, long-standing, unpatched vulnerabilities in networking devices and widely used software continued to provide reliable entry points for these adversaries.

Once inside, the focus shifts to persistence. Web shells, custom backdoors, tunneling tools, and credential harvesting all support long-term access.

There’s also more overlap than ever before between state-sponsored and financially motivated activity. It is likely that in some cases, state-sponsored actors conducted operations for personal profit alongside espionage-focused missions, while in others, cybercriminals collected valuable information during an attack that could be sold to espionage-motivated actors for further exploitation, giving the cybercriminals two revenue streams from a single intrusion.

Russian-linked cyber activity remains closely tied to Russia’s geopolitical objectives, particularly the war in Ukraine.

Many operations continue to rely on unpatched, older vulnerabilities (especially in networking devices) to gain initial access. These flaws provide a dependable way in for adversaries and support long-term intelligence gathering.

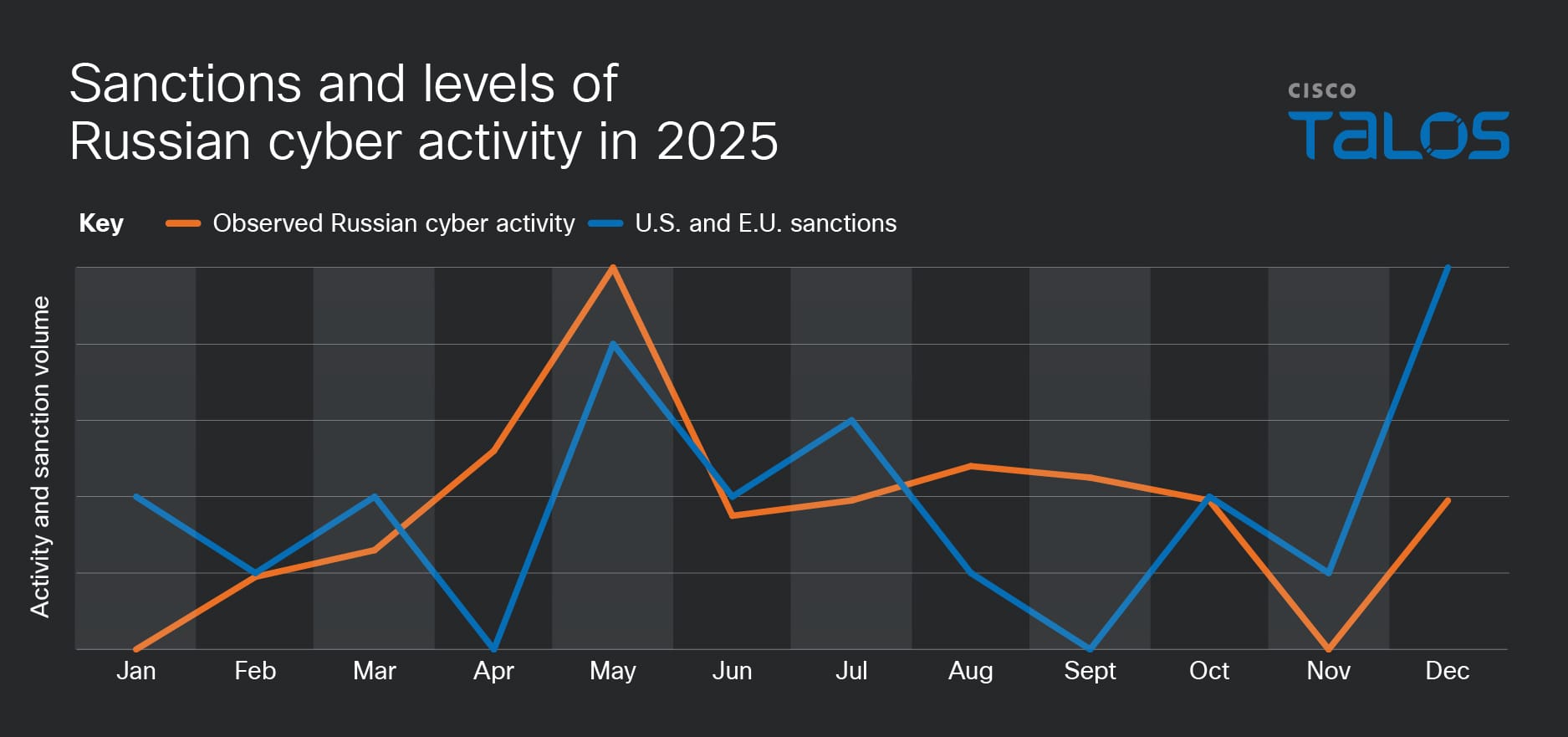

Russia’s offensive cyber activity is highly correlated with developments in the larger geopolitical sphere. For example, announcements of U.S. and E.U. sanctions intended to pressure Russia often coincided with changes in the level of Russian cyber activity we observed.

Common malware families like Dark Crystal RAT (DCRAT), Remcos RAT, and Smoke Loader appeared frequently in Talos investigations into operations against Ukraine in 2025. These families aren’t exclusive to Russia-nexus threat actors, but they continue to be effective in environments where patching and visibility are inconsistent, and should therefore be high-priority targets for defense and monitoring.

North Korean cyber operations leaned heavily into social engineering and insider access in 2025, serving both financial and espionage goals.

Campaigns like Contagious Interview (orchestrated by Famous Chollima) used fake recruiters posing as representatives of legitimate companies to socially engineer targets into executing code or handing over credentials. From there, actors stole cryptocurrency, exfiltrated data, and established persistent access.

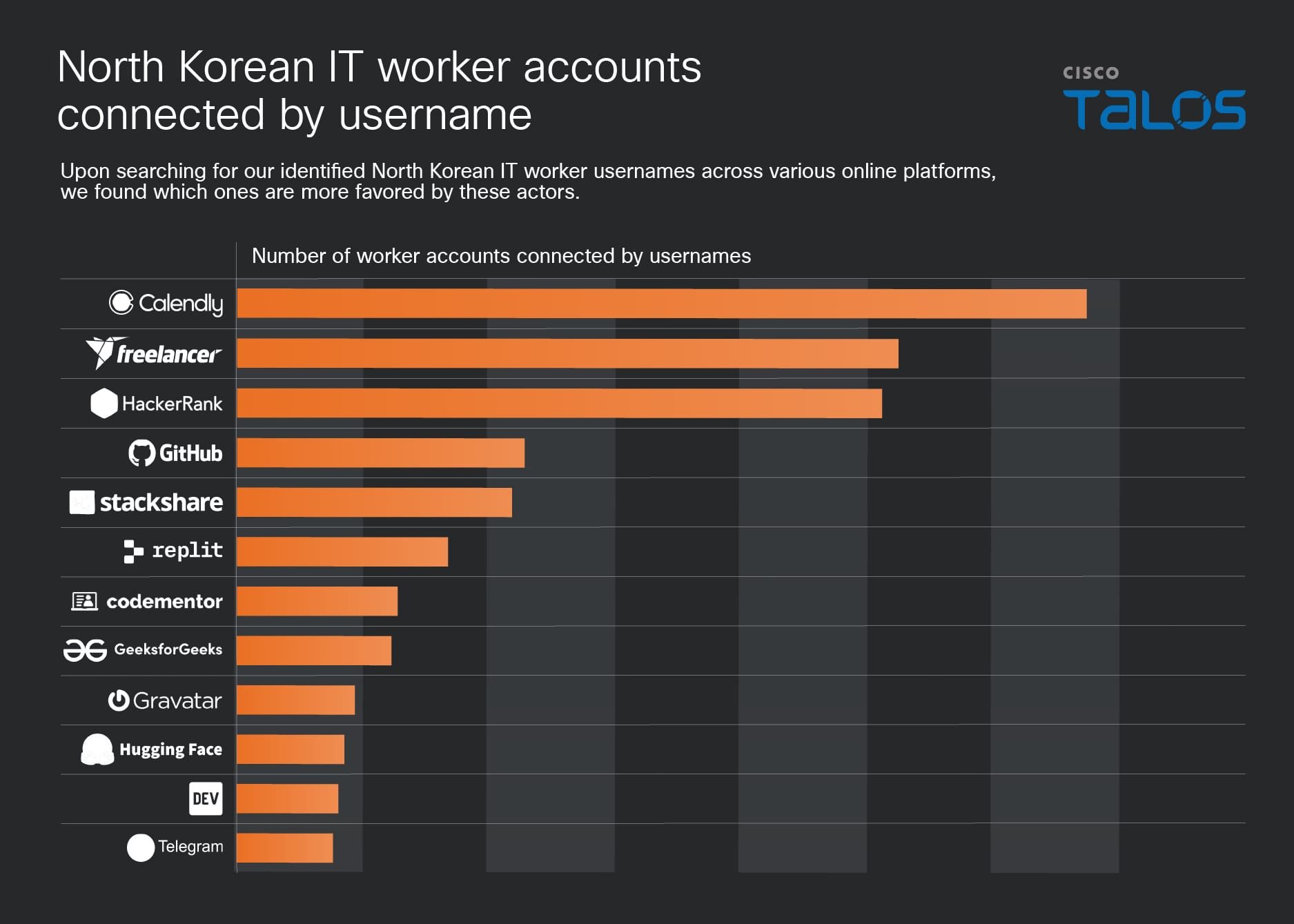

North Korean cyber actors also pulled off the largest cryptocurrency heist in history in 2025, stealing $1.5 billion. Additionally, thousands of IT workers used stolen identities and AI-generated profiles to secure positions at Fortune 500 companies, generating billions in annual revenue for North Korea’s nuclear weapons and ballistic missile programs.

Iranian cyber threat activity in 2025 combined visible disruption with long-term access.

Hacktivist operations increased by 60% in response to geopolitical events, particularly the Israel-Hamas conflict. These campaigns, which include distributed denial-of-service (DDoS) attacks, defacements, and other disruptive operations, are often designed to generate attention and shape narratives.

At the same time, more traditional advanced persistent threat (APT) activity focused on persistence. Groups such as ShroudedSnooper targeted sectors like telecommunications, using custom compact backdoors designed to blend into normal traffic and remain undetected.

ShroudedSnooper is an APT that public reporting widely attributes to Iran’s Ministry of Intelligence and Security (MOIS). It is very likely an initial access group that passes operations off to secondary threat actors for long-term espionage or destructive attacks.

For current threat intelligence related to the developing conflict in Iran, follow our coverage on the Talos blog.

Though the state-sponsored groups we tracked for the Talos Year in Review have different objectives, they share the same reliance on gaining and maintaining access. The following guidance is recommended for security teams:

Cisco Talos Blog – Read More

The JBL Live 780NC are a home run for midrange headphones, offering premium features in a sub-$300 package.

Latest news – Read More

CISOs face a shrinking window to prepare as AI models like Mythos collapse the gap between vulnerability discovery and exploitation, driving a new era of high-velocity cyberattacks.

The post ‘Mythos-Ready’ Security: CSA Urges CISOs to Prepare for Accelerated AI Threats appeared first on SecurityWeek.

SecurityWeek – Read More

The software industry is racing to write code with artificial intelligence. It is struggling, badly, to make sure that code holds up once it ships.

A survey of 200 senior site-reliability and DevOps leaders at large enterprises across the United States, United Kingdom, and European Union paints a stark picture of the hidden costs embedded in the AI coding boom. According to Lightrun’s 2026 State of AI-Powered Engineering Report, shared exclusively with VentureBeat ahead of its public release, 43% of AI-generated code changes require manual debugging in production environments even after passing quality assurance and staging tests. Not a single respondent said their organization could verify an AI-suggested fix with just one redeploy cycle; 88% reported needing two to three cycles, while 11% required four to six.

The findings land at a moment when AI-generated code is proliferating across global enterprises at a breathtaking pace. Both Microsoft CEO Satya Nadella and Google CEO Sundar Pichai have claimed that around a quarter of their companies’ code is now AI-generated. The AIOps market — the ecosystem of platforms and services designed to manage and monitor these AI-driven operations — stands at $18.95 billion in 2026 and is projected to reach $37.79 billion by 2031.

Yet the report suggests the infrastructure meant to catch AI-generated mistakes is badly lagging behind AI’s capacity to produce them.

“The 0% figure signals that engineering is hitting a trust wall with AI adoption,” said Or Maimon, Lightrun’s chief business officer, referring to the survey’s finding that zero percent of engineering leaders described themselves as “very confident” that AI-generated code will behave correctly once deployed. “While the industry’s emphasis on increased productivity has made AI a necessity, we are seeing a direct negative impact. As AI-generated code enters the system, it doesn’t just increase volume; it slows down the entire deployment pipeline.”

The dangers are no longer theoretical. In early March 2026, Amazon suffered a series of high-profile outages that underscored exactly the kind of failure pattern the Lightrun survey describes. On March 2, Amazon.com experienced a disruption lasting nearly six hours, resulting in 120,000 lost orders and 1.6 million website errors. Three days later, on March 5, a more severe outage hit the storefront — lasting six hours and causing a 99% drop in U.S. order volume, with approximately 6.3 million lost orders. Both incidents were traced to AI-assisted code changes deployed to production without proper approval.

The fallout was swift. Amazon launched a 90-day code safety reset across 335 critical systems, and AI-assisted code changes must now be approved by senior engineers before they are deployed.

Maimon pointed directly to the Amazon episodes. “This uncertainty isn’t based on a hypothesis,” he said. “We just need to look back to the start of March, when Amazon.com in North America went down due to an AI-assisted change being implemented without established safeguards.”

The Amazon incidents illustrate the central tension the Lightrun report quantifies in survey data: AI tools can produce code at unprecedented speed, but the systems designed to validate, monitor, and trust that code in live environments have not kept pace. Google’s own 2025 DORA report corroborates this dynamic, finding that AI adoption correlates with an increase in code instability, and that 30% of developers report little or no trust in AI-generated code.

Maimon cited that research directly: “Google’s 2025 DORA report found that AI adoption correlates with an almost 10% increase in code instability. Our validation processes were built for the scale of human engineering, but today, engineers have become auditors for massive volumes of unfamiliar code.”

One of the report’s most striking findings is the scale of human capital being consumed by AI-related verification work. Developers now spend an average of 38% of their work week — roughly two full days — on debugging, verification, and environment-specific troubleshooting, according to the survey. For 88% of the companies polled, this “reliability tax” consumes between 26% and 50% of their developers’ weekly capacity.

This is not the productivity dividend that enterprise leaders expected when they invested in AI coding assistants. Instead, the engineering bottleneck has simply migrated. Code gets written faster, but it takes far longer to confirm that it works.

“In some senses, AI has made the debugging problem worse,” Maimon said. “The volume of change is overwhelming human validation, while the generated code itself frequently does not behave as expected when deployed in Production. AI coding agents cannot see how their code behaves in running environments.”

The redeploy problem compounds the time drain. Every surveyed organization requires multiple deployment cycles to verify a single AI-suggested fix — and according to Google’s 2025 DORA report, a single redeploy cycle takes a day to one week on average. In regulated industries such as healthcare and finance, deployment windows are often narrow, governed by mandated code freezes and strict change-management protocols. Requiring three or more cycles to validate a single AI fix can push resolution timelines from days to weeks.
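To make the scale of these numbers concrete, the arithmetic can be sketched in a few lines of Python. The 5-day, 40-hour work week is an assumption, not a figure from the survey; the one-day-to-one-week cycle length is the DORA range quoted above, and the function names are illustrative only.

```python
# Back-of-the-envelope arithmetic for the survey figures.
# Assumption (not from the report): a 5-day, 40-hour work week.
WORK_DAYS_PER_WEEK = 5
WORK_HOURS_PER_WEEK = 40


def reliability_tax(share: float) -> tuple[float, float]:
    """Return (days, hours) per week consumed by a given share of capacity."""
    return share * WORK_DAYS_PER_WEEK, share * WORK_HOURS_PER_WEEK


def validation_window(cycles: int, min_days: float = 1.0,
                      max_days: float = 7.0) -> tuple[float, float]:
    """Calendar-day range to validate one fix when each redeploy cycle
    takes between min_days and max_days."""
    return cycles * min_days, cycles * max_days


if __name__ == "__main__":
    days, hours = reliability_tax(0.38)
    print(f"38% of the week: {days:.1f} days, {hours:.1f} hours")
    for cycles in (1, 2, 3, 6):
        low, high = validation_window(cycles)
        print(f"{cycles} cycle(s): {low:.0f} to {high:.0f} days")
```

Under these assumptions, three cycles already span 3 to 21 calendar days, which is how a fix can slip "from days to weeks" before any regulated change window is factored in.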

Maimon rejected the idea that these multiple cycles represent prudent engineering discipline. “This is not discipline, but an expensive bottleneck and a symptom of the fact that AI-generated fixes are often unreliable,” he said. “If we can move from three cycles to one, we reclaim a massive portion of that 38% lost engineering capacity.”

If the productivity drain is the most visible cost, the Lightrun report argues the deeper structural problem is what it calls “the runtime visibility gap” — the inability of AI tools and existing monitoring systems to observe what is actually happening inside running applications.

Sixty percent of the survey’s respondents identified a lack of visibility into live system behavior as the primary bottleneck in resolving production incidents. In 44% of cases where AI SRE or application performance monitoring tools attempted to investigate production issues, they failed because the necessary execution-level data — variable states, memory usage, request flow — had never been captured in the first place.
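As a rough illustration of what "execution-level data" means, the Python sketch below snapshots the local variables of the frame where an exception was raised. This is a generic standard-library technique, not how Lightrun or any APM vendor captures runtime state (production tools do this without wrapping calls), and `capture_failure_evidence` and `average_item_price` are hypothetical names.

```python
def capture_failure_evidence(func, *args, **kwargs):
    """Call func; on an exception, return the error plus a snapshot of the
    local variables in the innermost frame, i.e. where it was raised."""
    try:
        return {"ok": True, "result": func(*args, **kwargs)}
    except Exception as exc:
        tb = exc.__traceback__
        # The first traceback entry is this wrapper; walk to the last entry.
        while tb.tb_next is not None:
            tb = tb.tb_next
        frame = tb.tb_frame
        return {
            "ok": False,
            "error": repr(exc),
            "function": frame.f_code.co_name,
            "line": tb.tb_lineno,
            # repr() freezes each value as text so later mutation cannot alter it.
            "locals": {name: repr(value) for name, value in frame.f_locals.items()},
        }


def average_item_price(order_total, item_count):
    # Hypothetical bug: no guard for an empty order.
    return order_total / item_count


if __name__ == "__main__":
    print(capture_failure_evidence(average_item_price, 120, 4))
    print(capture_failure_evidence(average_item_price, 120, 0))
```

The failing call records `item_count` as `0` at the exact point of failure; if nothing like this was captured when the error happened, a later investigation has only logs and guesses to work with.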

The report paints a picture of AI tools operating essentially blind in the environments that matter most. Ninety-seven percent of engineering leaders said their AI SRE agents operate without significant visibility into what is actually happening in production. Approximately half of all companies (49%) reported their AI agents have only limited visibility into live execution states. Only 1% reported extensive visibility, and not a single respondent claimed full visibility.

This is the gap that turns a minor software bug into a costly outage. When an AI-suggested fix fails in production — as 43% of them do — engineers cannot rely on their AI tools to diagnose the problem, because those tools cannot observe the code’s real-time behavior. Instead, teams fall back on what the report calls “tribal knowledge”: the institutional memory of senior engineers who have seen similar problems before and can intuit the root cause from experience rather than data. The survey found that 54% of resolutions to high-severity incidents rely on tribal knowledge rather than diagnostic evidence from AI SREs or APMs.

The trust deficit plays out with particular intensity in the finance sector. In an industry where a single application error can cascade into millions of dollars in losses per minute, the survey found that 74% of financial-services engineering teams rely on tribal knowledge over automated diagnostic data during serious incidents — far higher than the 44% figure in the technology sector.

“Finance is a heavily regulated, high-stakes environment where a single application error can cost millions of dollars per minute,” Maimon said. “The data shows that these teams simply do not trust AI not to make a dangerous mistake in their Production environments. This is a rational response to tool failure.”

The distrust extends beyond finance. Perhaps the most telling data point in the entire report is that not a single organization surveyed — across any industry — has moved its AI SRE tools into actual production workflows. Ninety percent remain in experimental or pilot mode. The remaining 10% evaluated AI SRE tools and chose not to adopt them at all. This represents an extraordinary gap between market enthusiasm and operational reality: enterprises are spending aggressively on AI for IT operations, but the tools they are buying remain quarantined from the environments where they would deliver the most value.

Maimon described this as one of the report’s most significant revelations. “Leaders are eager to adopt these new AI tools, but they don’t trust AI to touch live environments,” he said. “The lack of trust is shown in the data; 98% have lower trust in AI operating in production than in coding assistants.”

The findings raise pointed questions about the current generation of observability tools from major vendors like Datadog, Dynatrace, and Splunk. Seventy-seven percent of the engineering leaders surveyed reported low or no confidence that their current observability stack provides enough information to support autonomous root cause analysis or automated incident remediation.

Maimon did not shy away from naming the structural problem. “Major vendors often build ‘closed-garden’ ecosystems where their AI SREs can only reason over data collected by their own proprietary agents,” he said. “In a modern enterprise, teams typically have a multi-tool stack to provide full coverage. By forcing a team into a single-vendor silo, these tools create an uncomfortable dependency and a strategic liability: if the vendor’s data coverage is missing a specific layer, the AI is effectively blind to the root cause.”

The second issue, Maimon argued, is that current observability-backed AI SRE solutions offer only partial visibility — defined by what engineers thought to log at the time of deployment. Because failures rarely follow predefined paths, autonomous root cause analysis using only these tools will frequently miss the key diagnostic evidence. “To move toward true autonomous remediation,” he said, “the industry must shift toward AI SRE without vendor lock-in; AI SREs must be an active participant that can connect across the entire stack and interrogate live code to capture the ground truth of a failure as it happens.”

When asked what it would take to trust AI SREs, the survey’s respondents coalesced unanimously around live runtime visibility. Fifty-eight percent said they need the ability to provide “evidence traces” of variables at the point of failure, and 42% cited the ability to verify a suggested fix before it actually deploys. No respondents selected the ability to ingest multiple log sources or provide better natural language explanations — suggesting that engineering leaders do not want AI that talks better, but AI that can see better.

The survey was administered by Global Surveyz Research, an independent firm, and drew responses from Directors, VPs, and C-level executives in SRE and DevOps roles at enterprises with 1,500 or more employees across the finance, technology, and information technology sectors. Responses were collected during January and February 2026, with questions randomized to prevent order bias.

Lightrun, which is backed by $110 million in funding from Accel and Insight Partners and counts AT&T, Citi, Microsoft, Salesforce, and UnitedHealth Group among its enterprise clients, has a clear commercial interest in the problem the report describes: the company sells a runtime observability platform designed to give AI agents and human engineers real-time visibility into live code execution. Its AI SRE product uses a Model Context Protocol connection to generate live diagnostic evidence at the point of failure without requiring redeployment. That commercial interest does not diminish the survey’s findings, which align closely with independent research from Google DORA and the real-world evidence of the Amazon outages.

Taken together, they describe an industry confronting an uncomfortable paradox. AI has solved the slowest part of building software — writing the code — only to reveal that writing was never the hard part. The hard part was always knowing whether it works. And on that question, the engineers closest to the problem are not optimistic.

“If the live visibility gap is not closed, then teams are really just compounding instability through their adoption of AI,” Maimon said. “Organizations that don’t bridge this gap will find themselves stuck with long redeploy loops, to solve ever more complex challenges. They will lose their competitive speed to the very AI tools that were meant to provide it.”

The machines learned to write the code. Nobody taught them to watch it run.

Security | VentureBeat – Read More

ViperTunnel is a Python-based backdoor linked to DragonForce ransomware that targets businesses using Windows servers across the US and the UK.

Hackread – Cybersecurity News, Data Breaches, AI and More – Read More

The parser is meant to mitigate the entire class of memory safety bugs in the low-level environment.

The post Google Adds Rust DNS Parser to Pixel Phones for Better Security appeared first on SecurityWeek.

SecurityWeek – Read More

The sprawling cybercrime operation abuses major providers to prevent takedowns and distance itself from sanctions.

The post Triad Nexus Evades Sanctions to Fuel Cybercrime appeared first on SecurityWeek.

SecurityWeek – Read More

Modern phishing campaigns increasingly abuse legitimate services. Cloud platforms, file-sharing tools, trusted domains, and widely used SaaS applications are now part of the attacker’s toolkit. Instead of breaking trust, attackers borrow it.

This shift creates a dangerous asymmetry. Security controls often whitelist or inherently trust these services, while users are far less likely to question them. The result is a smoother path from inbox to infection.

According to ANY.RUN’s annual Malware Trends Report for 2025, phishing driven by multi-stage redirect chains and trusted-cloud hosting has become the dominant attack vector, with RATs and backdoors rising 28% and 68% respectively. The abuse of legitimate platforms has made traditional reputation-based filtering fundamentally unreliable.

Early detection is no longer simply a technical performance metric. It is a business continuity imperative. When threats hide inside trusted infrastructure, the window between initial infection and serious organizational impact can be measured in hours, not days. Security teams that cannot identify and contain an attack in its earliest stages — before the payload executes, before the C2 channel is established, before the attacker pivots deeper into the network — face an exponentially harder response challenge.

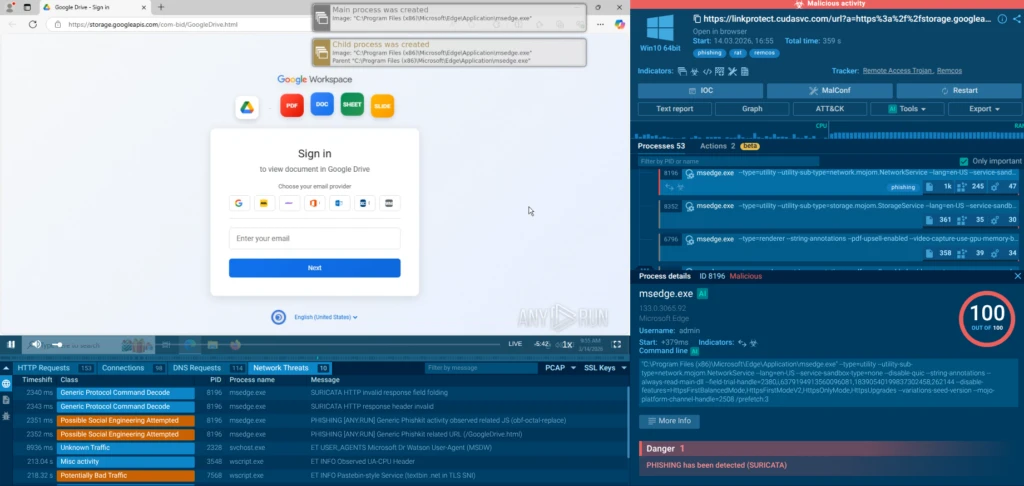

In April 2026, ANY.RUN’s threat research team identified a sophisticated multi-stage phishing campaign that perfectly exemplifies this new breed of attack. The campaign abuses Google Cloud Storage to host HTML phishing pages themed as Google Drive document viewers, ultimately delivering the Remcos Remote Access Trojan (RAT).


The attackers parked their phishing pages on a legitimate, widely-trusted Google domain. This single architectural choice allowed the campaign to bypass a wide range of conventional email security gateways and web filtering tools.

Convincing Google Drive-themed phishing pages are hosted in storage.googleapis.com buckets such as pa-bids, com-bid, contract-bid-0, in-bids, and out-bid, which appear as the first path segment of the URL. Examples include URLs like hxxps://storage[.]googleapis[.]com/com-bid/GoogleDrive.html. These pages mimic legitimate Google Workspace sign-in flows, complete with branded logos, file-type icons (PDF, DOC, SHEET, SLIDE), and prompts to “Sign in to view document in Google Drive.”
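Because the host is always the trusted storage.googleapis.com, any triage has to look at the path instead. The Python sketch below is a minimal helper under that assumption: it refangs a reported URL, extracts the bucket from a path-style GCS URL, and labels it. The function names and labels are hypothetical, and virtual-hosted URLs (`<bucket>.storage.googleapis.com`) are deliberately not handled.

```python
from urllib.parse import urlsplit

# Bucket names reported for this campaign.
CAMPAIGN_BUCKETS = {"pa-bids", "com-bid", "contract-bid-0", "in-bids", "out-bid"}


def refang(url: str) -> str:
    """Undo the common defanging used in reports: hxxp -> http, [.] -> ."""
    return url.replace("hxxp", "http", 1).replace("[.]", ".")


def classify_gcs_url(url: str) -> str:
    """Rough triage label for a URL; a hint for analysts, not a verdict."""
    parts = urlsplit(refang(url))
    if parts.hostname != "storage.googleapis.com":
        return "not-gcs-path-style"
    # Path-style GCS URLs have the form /<bucket>/<object>.
    bucket, _, obj = parts.path.lstrip("/").partition("/")
    if bucket in CAMPAIGN_BUCKETS:
        return "known-campaign-bucket"
    if obj.lower().endswith((".html", ".htm")):
        return "gcs-hosted-html"
    return "gcs-other"


if __name__ == "__main__":
    print(classify_gcs_url("hxxps://storage[.]googleapis[.]com/com-bid/GoogleDrive.html"))
```

Matching bucket names only catches this campaign's known infrastructure. The broader `gcs-hosted-html` label is better used to route a link to sandbox detonation than to block it outright, since plenty of legitimate content is served the same way.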

The pages are crafted to harvest full account credentials: email address, password, and one-time passcode. But the credential theft is just the opening act. After a “successful login,” the page prompts the download of a file named Bid-Packet-INV-Document.js, which serves as the entry point for the malware delivery chain.

The delivery chain is deliberately complex and layered to evade detection at every stage:

1. Phishing Email Delivery. Because the sending domain and the linked domain are both associated with legitimate Google infrastructure, the email passes standard DMARC, SPF, and DKIM authentication checks, and is not flagged by reputation-based email filters.

2. Fake Google Drive Login Page. The googleapis.com link opens a convincing replica of the Google Drive interface, prompting the victim to authenticate with their email address, password, and one-time passcode. Credentials entered here are captured and exfiltrated to the attacker’s command-and-control infrastructure.

3. Malicious JavaScript Download. The victim is prompted to download Bid-Packet-INV-Document.js, presented as a business document. When executed under Windows Script Host, this JavaScript file runs time-based evasion logic: it can delay execution to outlast sandboxes that only analyze behavior within a fixed time window.

4. VBS Chain and Persistence. The JavaScript launches a first VBS stage, which downloads and silently executes a second VBS file. This second stage drops components into %APPDATA%\WindowsUpdate (a folder name chosen to blend in with legitimate Windows processes) and configures Startup persistence, ensuring the malware survives system reboots.
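A defender reviewing file-creation logs could look for exactly this layout. The Python sketch below is a minimal path check, assuming logged paths are full Windows paths; the write-up does not name the dropped files or the exact Startup mechanism, so flagging script files in the per-user Startup folder is an assumption, and the function name is hypothetical.

```python
from pathlib import PureWindowsPath

SCRIPT_SUFFIXES = {".vbs", ".js", ".ps1"}


def is_suspicious_drop(path: str) -> bool:
    """Flag file paths matching the persistence layout described above."""
    p = PureWindowsPath(path)
    parts = [part.lower() for part in p.parts]
    # %APPDATA% usually expands to C:\Users\<user>\AppData\Roaming.
    in_fake_update_dir = any(
        parts[i:i + 3] == ["appdata", "roaming", "windowsupdate"]
        for i in range(len(parts))
    )
    # Per-user Startup folder: ...\Start Menu\Programs\Startup
    in_startup = "start menu" in parts and "startup" in parts
    return in_fake_update_dir or (in_startup and p.suffix.lower() in SCRIPT_SUFFIXES)
```

`PureWindowsPath` parses Windows paths on any operating system, so the same check can run on a Linux log pipeline.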

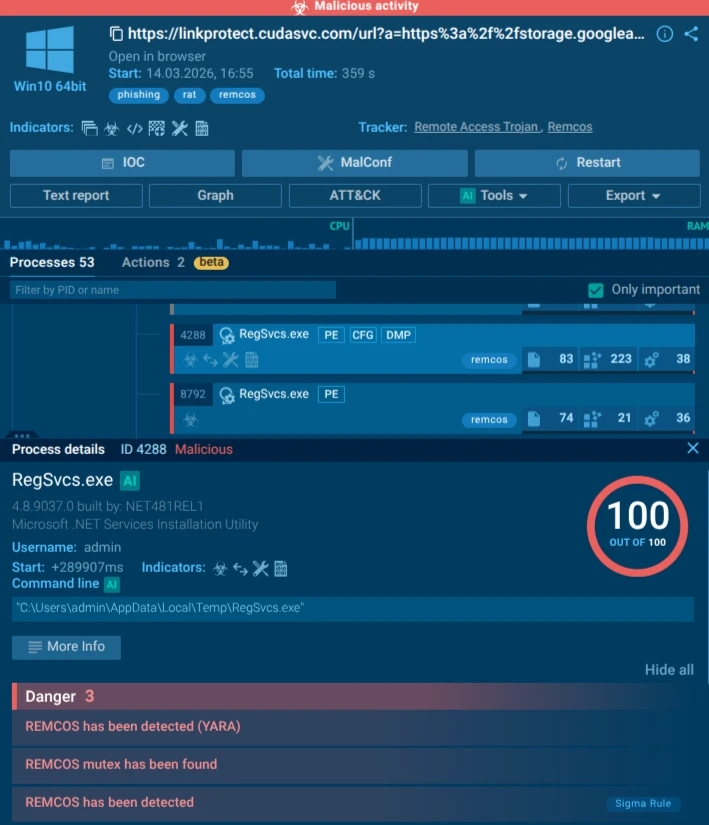

5. PowerShell Orchestration. A PowerShell script (DYHVQ.ps1) then orchestrates the loading of an obfuscated portable executable stored as ZIFDG.tmp, which contains the Remcos RAT payload. To remain stealthy, the chain simultaneously fetches an additional obfuscated .NET loader from Textbin, a text-hosting service, loading it directly in memory via Assembly.Load, leaving no file on disk for traditional antivirus engines to scan.

6. Process Hollowing via RegSvcs.exe. The .NET loader abuses RegSvcs.exe for process hollowing. Because RegSvcs.exe is signed by Microsoft and carries a clean reputation on VirusTotal, its execution appears benign in endpoint logs. The loader creates or starts RegSvcs.exe from %TEMP%, hollowing the process and injecting the Remcos payload into its memory space. The result is a partially fileless Remcos instance: most of the malicious logic executes entirely in memory, never touching the disk in a form that a signature-based scanner would recognize.

7. C2 Establishment. Remcos establishes an encrypted communication channel back to the attacker’s command-and-control server and writes persistence entries into the Windows Registry under HKEY_CURRENT_USER\Software\Remcos-{ID}, ensuring continued access across reboots. From this point, the attacker has full, persistent, covert control over the compromised endpoint.
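The registry artifact gives a simple hunting hook. Below is a minimal Python sketch that matches logged key paths against that location, plus a Windows-only live check using the standard-library `winreg` module. The format of the `{ID}` suffix is not given in the write-up, so any non-empty key name after `Remcos-` is accepted; both function names are hypothetical.

```python
import re

# Any non-empty key name after "Remcos-" is accepted because the {ID}
# format is unspecified.
REMCOS_KEY = re.compile(
    r"^(?:HKEY_CURRENT_USER|HKCU)\\Software\\Remcos-[^\\]+(?:\\|$)", re.IGNORECASE
)


def is_remcos_key(key_path: str) -> bool:
    """True if a logged registry path sits under HKCU\\Software\\Remcos-<ID>."""
    return REMCOS_KEY.match(key_path) is not None


def scan_current_user() -> list[str]:
    """Windows-only live check of HKCU\\Software subkeys."""
    import winreg  # imported lazily so the matcher above also runs elsewhere

    hits = []
    with winreg.OpenKey(winreg.HKEY_CURRENT_USER, "Software") as software:
        index = 0
        while True:
            try:
                name = winreg.EnumKey(software, index)
            except OSError:  # raised once there are no more subkeys
                break
            candidate = "HKEY_CURRENT_USER\\Software\\" + name
            if is_remcos_key(candidate):
                hits.append(candidate)
            index += 1
    return hits
```

A fixed key-name prefix is trivial for a builder to change, so treat a match as strong evidence and the absence of a match as weak evidence.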

ANY.RUN’s sandbox analysis clearly visualizes this chain: wscript.exe spawns multiple VBS and JS scripts, cmd.exe and powershell.exe handle staging, and RegSvcs.exe is flagged for Remcos behavior. The entire process tree demonstrates how attackers chain living-off-the-land binaries (LOLBins) with obfuscation and in-memory execution.
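The same process tree can be expressed as a behavioral rule. The Python sketch below is a toy version, not ANY.RUN's detection logic or a Sigma rule. The `ProcessEvent` shape is an assumption, and the two heuristics are drawn from the chain above: RegSvcs.exe running from a Temp folder, or RegSvcs.exe with a script host and a cmd/PowerShell stager among its ancestors.

```python
from dataclasses import dataclass
from pathlib import PureWindowsPath


@dataclass
class ProcessEvent:
    pid: int
    ppid: int
    image: str  # full path of the executable, as an EDR or sandbox might log it


SCRIPT_HOSTS = {"wscript.exe", "cscript.exe"}
STAGERS = {"cmd.exe", "powershell.exe"}


def image_name(event: ProcessEvent) -> str:
    return PureWindowsPath(event.image).name.lower()


def ancestors(event: ProcessEvent, by_pid: dict[int, ProcessEvent]):
    """Yield parent processes, nearest first, stopping at unknown PIDs or loops."""
    seen = set()
    current = by_pid.get(event.ppid)
    while current is not None and current.pid not in seen:
        seen.add(current.pid)
        yield current
        current = by_pid.get(current.ppid)


def flag_regsvcs(events: list[ProcessEvent]) -> list[int]:
    """Return PIDs of RegSvcs.exe instances that look like a hollowing host."""
    by_pid = {e.pid: e for e in events}
    alerts = []
    for e in events:
        if image_name(e) != "regsvcs.exe":
            continue
        chain = {image_name(a) for a in ancestors(e, by_pid)}
        # Heuristic 1: the legitimate binary lives under the .NET Framework
        # directory, so a copy running from a Temp folder is suspicious.
        from_temp = "temp" in {part.lower() for part in PureWindowsPath(e.image).parts}
        # Heuristic 2: a script host and a cmd/PowerShell stager upstream.
        scripted = bool(chain & SCRIPT_HOSTS) and bool(chain & STAGERS)
        if from_temp or scripted:
            alerts.append(e.pid)
    return alerts
```

Keying on lineage and location rather than on the binary's hash is the point. RegSvcs.exe itself is clean, so only its context gives it away.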

The attack succeeds because it weaponizes trust at every layer. Google Storage provides reputation immunity. RegSvcs.exe is a signed Microsoft binary used for .NET service installation: its clean hash means endpoint protection rarely flags it. Combined with heavy obfuscation, time-based evasion, and fileless techniques, the campaign slips past static analysis and many EDR rules that rely on file reputation or known malicious domains.

At the heart of the final payload is Remcos RAT — a commercially available Remote Access Trojan that has become a favorite among cybercriminals due to its affordability, ease of use, and powerful feature set. It grants attackers full remote control over the compromised system. Capabilities include keylogging, credential harvesting from browsers and password managers, screenshot capture, file upload/download, remote command execution, microphone and webcam access, and clipboard monitoring. It supports persistence mechanisms, anti-analysis tricks, and encrypted C2 communication.

The dangers of Remcos extend far beyond initial access. It serves as a beachhead for further attacks: ransomware deployment, lateral movement across the corporate network, data exfiltration of intellectual property or customer records, and even supply-chain compromise if the infected machine belongs to a vendor. Because it runs in memory inside a trusted process, it can remain undetected for weeks or months, silently harvesting sensitive data.

Enterprises face amplified risk because these campaigns target high-value users (executives, finance teams, and procurement staff) who routinely handle sensitive documents and have elevated privileges. A single successful infection can lead to:

In attacks that exploit legitimate services, the Mean Time to Detect (MTTD) for conventional security tools is dramatically extended. When the initial link is clean, the host domain is trusted, and the payload runs inside a legitimate Microsoft process, the alert chain that SOC teams depend on generates few or no signals. The attacker operates in silence while gathering intelligence, escalating privileges, and expanding their foothold.

Defending against phishing campaigns that abuse legitimate services requires a security capability that operates at the behavioral level — one that can observe what happens after a link is clicked or a file is opened, not just assess whether a URL or hash matches a known-bad list. ANY.RUN’s Enterprise Suite is built precisely for this purpose, and its three core modules address the threat at complementary stages of the detection and response lifecycle.

The foundation of ANY.RUN’s detection capability is its Interactive Sandbox: a cloud-based, fully interactive analysis environment that allows security analysts to safely detonate suspicious files and URLs in real time. Unlike automated sandboxes that analyze behavior passively within a fixed time window, ANY.RUN’s sandbox supports genuine human interaction: analysts can click, type, scroll, and navigate within the isolated virtual machine, triggering behavior that might be blocked by time-delay evasion or anti-automation logic.

In the Google Cloud Storage / Remcos campaign, this capability is decisive. The malicious JavaScript embeds time-based evasion logic, a mechanism designed specifically to defeat automated sandbox analysis. An interactive sandbox can wait out that delay, manually trigger the next stage, and observe the complete execution chain from the initial JS download through the VBS stages, the PowerShell orchestration, the process hollowing via RegSvcs.exe, and the final Remcos C2 callback.

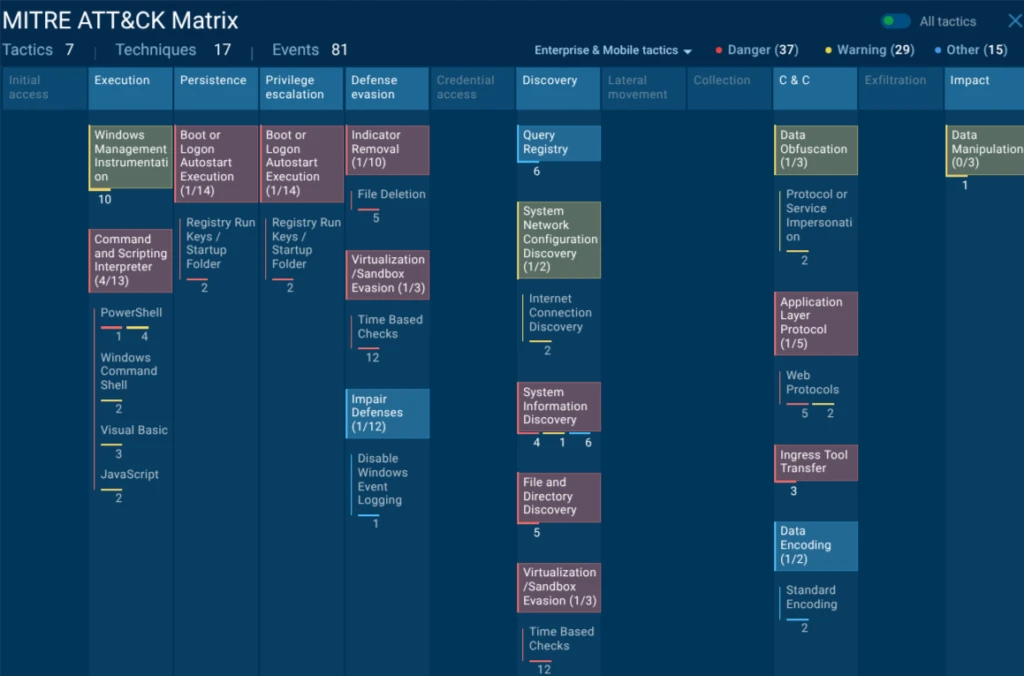

The result is not just a verdict but a full behavioral map: every process spawned, every network connection initiated, every registry key written, every file dropped. This map translates directly into actionable detection logic — MITRE ATT&CK-mapped TTPs, Sigma rules that can be deployed to SIEM and EDR platforms, and concrete IOCs that can be operationalized across the security stack.

For SOC teams, this means the difference between seeing an alert that says ‘suspicious JavaScript file’ and understanding the complete threat: this is Remcos RAT, delivered via process hollowing, with these C2 addresses, using these persistence mechanisms, and these are the detection rules that will catch the next variant.

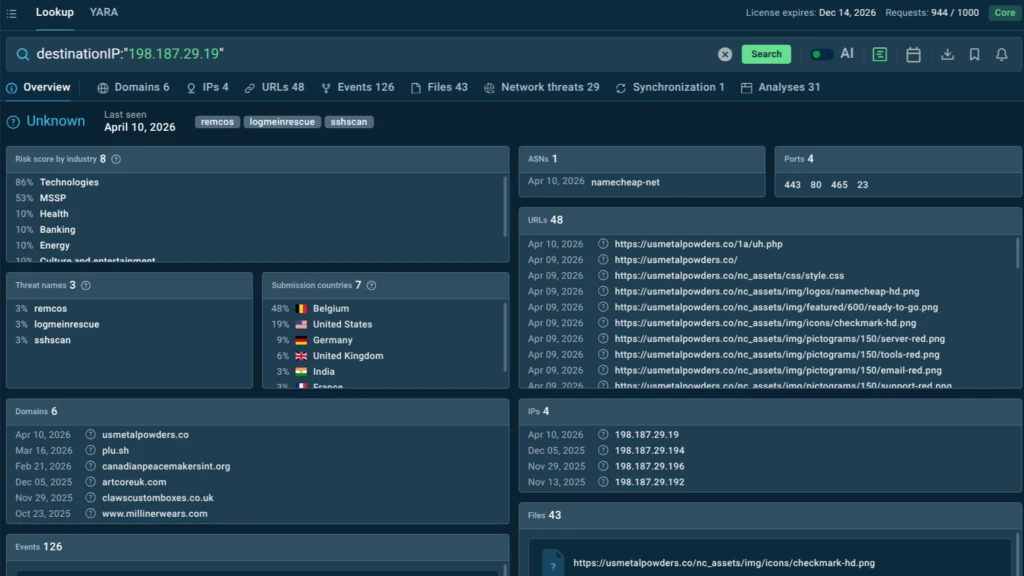

ANY.RUN’s Threat Intelligence Lookup is a searchable, continuously updated database of threat intelligence drawn from real-time malware analysis conducted by a community of over 600,000 cybersecurity professionals and 15,000 organizations worldwide. It functions as a force multiplier for threat hunting and incident response, providing instant enrichment for any indicator — IP address, domain, file hash, URL, or behavioral signature.

In the context of the Google Cloud Storage / Remcos campaign, Threat Intelligence Lookup enables analysts to move rapidly from a single observed indicator to a comprehensive understanding of the campaign’s scope. A C2 IP address flagged by sandbox analysis can be pivoted to reveal all associated Remcos samples in the database, the infrastructure pattern used across the campaign, related file hashes, and behavioral indicators that might be present in other systems.
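The pivot itself is a simple idea: index reports by each indicator, then hop from one shared value to the next. The Python sketch below shows a two-hop pivot over a toy, in-memory list of records. It does not use or imitate the TI Lookup API. The hashes are placeholders, the IPs come from the RFC 5737 documentation ranges, and the `infra` labels are invented, so none of this is real campaign data.

```python
from collections import defaultdict

# Toy records standing in for sandbox reports (placeholder values only).
SAMPLES = [
    {"sha256": "hash-a", "family": "Remcos", "c2": "203.0.113.10", "infra": "bucket-1"},
    {"sha256": "hash-b", "family": "Remcos", "c2": "203.0.113.10", "infra": "bucket-2"},
    {"sha256": "hash-c", "family": "Remcos", "c2": "198.51.100.7", "infra": "bucket-2"},
    {"sha256": "hash-d", "family": "Other", "c2": "192.0.2.55", "infra": "bucket-9"},
]


def build_index(samples, field):
    """Map each value of `field` to the samples that carry it."""
    index = defaultdict(list)
    for sample in samples:
        index[sample[field]].append(sample)
    return index


def pivot(samples, start_field, start_value, via_field):
    """Two-hop pivot: samples sharing start_value, then every sample that
    shares a via_field value with any of those."""
    by_start = build_index(samples, start_field)
    by_via = build_index(samples, via_field)
    first_hop = by_start.get(start_value, [])
    related = {s["sha256"] for s in first_hop}
    for s in first_hop:
        related.update(x["sha256"] for x in by_via.get(s[via_field], []))
    return sorted(related)


if __name__ == "__main__":
    print(pivot(SAMPLES, "c2", "203.0.113.10", "infra"))
```

Starting from one C2 address, the second hop surfaces `hash-c`, which uses a different C2 but shares hosting with `hash-b`. That is the kind of expansion a single indicator cannot show on its own.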

This pivoting capability is particularly valuable for detecting multi-stage attacks where the initial indicators are clean (a googleapis.com URL, a signed Microsoft binary) but later-stage indicators — C2 domains, specific PowerShell script signatures, anomalous RegSvcs.exe activity — can be correlated against historical data to confirm campaign attribution and expand detection coverage.

For threat hunters, Threat Intelligence Lookup supports proactive campaign identification before an organization is impacted. YARA-based searches, combined with industry and geography filters, allow security teams to identify whether active campaigns are targeting their specific sector and region and to build detection rules based on real-world attacker behavior rather than theoretical models.

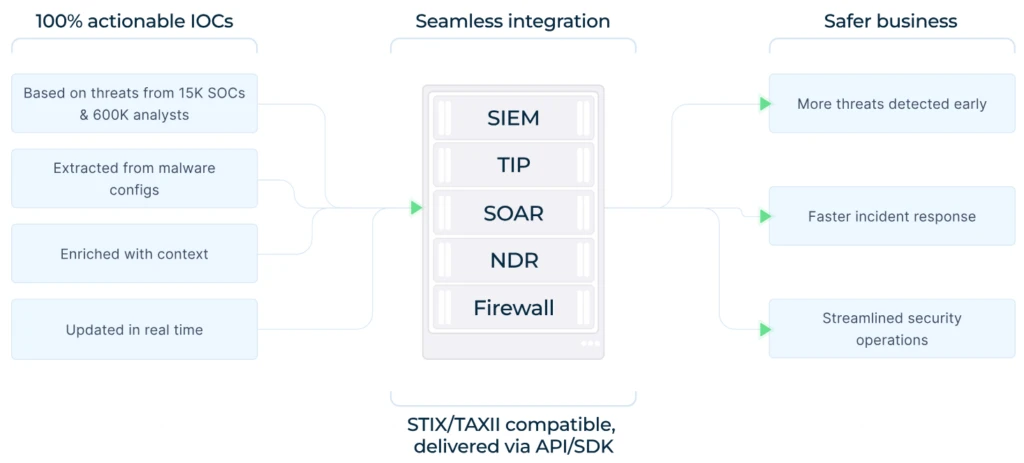

ANY.RUN’s Threat Intelligence Feeds deliver a continuous stream of fresh, verified malicious indicators directly into an organization’s security infrastructure — SIEM, SOAR, TIP, XDR — via STIX/TAXII and API/SDK integrations. These feeds are generated from live sandbox analysis across the ANY.RUN community, meaning they reflect actual attacker behavior observed in real-world campaigns, not synthetic or retrospectively compiled threat data.
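Whatever the provider, a STIX feed arrives as indicator objects whose `pattern` field holds the observable. The Python sketch below pulls values out of a STIX 2.1 bundle, assuming only simple single-comparison patterns such as `[ipv4-addr:value = '203.0.113.10']`. It is not ANY.RUN's SDK and skips TAXII transport entirely. Escaped quotes, hash comparisons, and AND/OR inside one bracket are not handled, so a production integration should use a full STIX pattern parser.

```python
import json
import re

# Matches single-comparison STIX patterns such as [ipv4-addr:value = '203.0.113.10'].
SIMPLE_PATTERN = re.compile(r"\[([a-z0-9-]+):value\s*=\s*'([^']*)'\]")


def extract_iocs(bundle_json: str) -> dict[str, set[str]]:
    """Group indicator values from a STIX 2.1 bundle by observable type."""
    bundle = json.loads(bundle_json)
    iocs: dict[str, set[str]] = {}
    for obj in bundle.get("objects", []):
        if obj.get("type") != "indicator" or obj.get("pattern_type") != "stix":
            continue
        for obs_type, value in SIMPLE_PATTERN.findall(obj.get("pattern", "")):
            iocs.setdefault(obs_type, set()).add(value)
    return iocs


if __name__ == "__main__":
    # Placeholder bundle; values are documentation-range examples.
    sample = {
        "type": "bundle",
        "id": "bundle--00000000-0000-4000-8000-000000000000",
        "objects": [
            {"type": "indicator", "pattern_type": "stix",
             "pattern": "[ipv4-addr:value = '203.0.113.10']"},
        ],
    }
    print(extract_iocs(json.dumps(sample)))
```

Grouping by observable type makes it straightforward to push IPs, domains, and URLs into the SIEM or firewall lists that expect each kind separately.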

A critical differentiator is the uniqueness rate: ANY.RUN reports that 99% of indicators in its feeds are unique to the platform, not duplicated from public threat intel sources. The feeds also dramatically reduce Tier 1 analyst workload by providing malicious-only alerts with full behavioral context, cutting through the alert fatigue that plagues security operations teams dealing with high volumes of false positives from tools that cannot distinguish between legitimate googleapis.com traffic and the specific pattern of googleapis.com traffic used in this campaign.

The Google Storage phishing campaign delivering Remcos RAT is a wake-up call. As attackers continue to abuse trusted cloud services and legitimate binaries, organizations can no longer rely on reputation or signatures alone. Early detection through behavioral analysis and proactive threat intelligence is no longer optional — it is essential for survival.

By leveraging ANY.RUN’s Enterprise Suite, security leaders can stay ahead of these evolving threats, protect critical assets, and maintain business continuity in an increasingly hostile digital landscape. The time to strengthen defenses is now — before the next bid document lands in your inbox.

ANY.RUN, a leading provider of interactive malware analysis and threat intelligence solutions, helps security teams investigate threats faster and with greater clarity across modern enterprise environments.

It allows teams to safely execute suspicious files and URLs, observe real behavior in an Interactive Sandbox, enrich indicators with immediate context through TI Lookup, and monitor emerging malicious infrastructure using Threat Intelligence Feeds. Together, these capabilities help reduce investigation uncertainty, accelerate triage, and limit unnecessary escalations across the SOC.

ANY.RUN is trusted by thousands of organizations worldwide and meets enterprise security and compliance expectations. It is SOC 2 Type II certified, demonstrating its commitment to protecting customer data and maintaining strong security controls.

The campaign hosts its phishing page on legitimate storage.googleapis.com domains instead of suspicious new sites, bypassing URL reputation filters entirely.

The payload is delivered through a layered chain of JS, VBS, and PowerShell stages and in-memory loading that culminates in process hollowing of the trusted RegSvcs.exe binary.

RegSvcs.exe is a signed Microsoft .NET binary with a clean VirusTotal reputation, which allows attackers to inject the Remcos payload without triggering file-based alerts.

Once active, Remcos provides full remote access, keylogging, credential theft, file exfiltration, screenshot capture, and persistence, all while running inside legitimate processes.

ANY.RUN's Interactive Sandbox detonates suspicious files and URLs in a safe environment, reveals the complete behavioral chain, and provides IOCs and process trees for immediate response.

To defend against such campaigns, organizations should enable behavioral analysis tools, integrate real-time threat intelligence feeds, train staff to recognize cloud-storage lures, and test suspicious links in an interactive sandbox before opening them.

The post When Trust Becomes a Weapon: Google Cloud Storage Phishing Deploying Remcos RAT appeared first on ANY.RUN’s Cybersecurity Blog.

ANY.RUN’s Cybersecurity Blog – Read More

This post is part of a small blog series covering common Entra ID security findings observed during real-world assessments. Each article explores selected findings in more detail to provide a clearer understanding of the underlying risks and practical implications.

Conditional Access policies are among the most important security controls in Entra ID. As the name suggests, they define under which conditions access is allowed within a tenant. They are used to enforce protections such as MFA, restrict access based on device state or location, and apply stronger controls to sensitive applications or privileged accounts.

At the same time, Conditional Access is a broad and complex topic. The security benefits depend not only on whether policies exist, but also on how well they are designed, scoped, and maintained over time.

A full discussion would easily justify a separate blog post series. Therefore, this post takes a higher-level view and focuses on general weaknesses and commonly occurring issues.

Reviewing existing Conditional Access policies is one of the most important tasks in an Entra ID security assessment. This section highlights common issues that we regularly observe in practice, including coverage gaps and design weaknesses that reduce the intended security benefits.

Each Conditional Access policy must define which identities it applies to. Weaknesses in this area can leave relevant users insufficiently protected.

A common approach is to scope important Conditional Access policies, for example policies enforcing MFA, to specific groups. In principle, this can work well. In practice, however, we often find that not all relevant users are actually members of the targeted groups. As a result, some users remain outside the scope of the policy and are therefore not protected as intended.
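This coverage gap is essentially a set difference between all relevant users and the members of the targeted groups. The following is a minimal sketch with hypothetical user and group names. It assumes the group membership has already been resolved (including nested groups) from the tenant, for example via Microsoft Graph; EntraFalcon's `-ExportCapUncoveredUsers` switch, described later, addresses the same question.

```python
def uncovered_users(all_users, group_members, included_groups, excluded_users=()):
    """Return the users a group-scoped Conditional Access policy does not reach.

    group_members maps a group name to its (already resolved, transitive)
    members. Exclusions take precedence over inclusions, as in Entra ID.
    """
    covered = set()
    for group in included_groups:
        covered |= group_members.get(group, set())
    covered -= set(excluded_users)
    return set(all_users) - covered


# Hypothetical tenant: carol was never added to the MFA group.
users = {"alice", "bob", "carol"}
members = {"MFA-Users": {"alice", "bob"}}
print(sorted(uncovered_users(users, members, ["MFA-Users"])))  # ['carol']
print(sorted(uncovered_users(users, members, ["MFA-Users"], excluded_users=["bob"])))  # ['bob', 'carol']
```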

Conditional Access policies often target administrative accounts based on Entra ID roles. This is generally a sensible approach, but in practice we regularly see tenants where privileged roles are in use that are not included in the relevant Conditional Access policies. This can leave administrative accounts less protected than originally intended.

In addition, there is an important Microsoft limitation to be aware of: scoped role assignments are not considered in Conditional Access role targeting[1]. For example, if a user has the User Administrator role scoped only to an Administrative Unit, that user is not included when a policy targets the role.
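Both gaps can be checked against a list of role assignments: roles in use that the policy does not target, and targeted roles that are only assigned at a narrower scope. The sketch below works on dictionaries loosely modelled on Microsoft Graph `unifiedRoleAssignment` objects (`principalId`, `roleDefinitionId`, `directoryScopeId`, where `"/"` denotes a tenant-wide assignment). The role names and the administrative unit are placeholders, and eligible (PIM) assignments are not considered.

```python
def missed_by_role_targeting(assignments, targeted_role_ids):
    """Return (principal, role, reason) for assignments a role-targeted policy misses.

    Each assignment is a dict loosely shaped like a Microsoft Graph
    unifiedRoleAssignment; directoryScopeId "/" means tenant-wide.
    """
    missed = []
    for a in assignments:
        if a["roleDefinitionId"] not in targeted_role_ids:
            reason = "role not included in policy"
        elif a["directoryScopeId"] != "/":
            reason = "scoped assignment ignored by role targeting"
        else:
            continue  # tenant-wide assignment of a targeted role: covered
        missed.append((a["principalId"], a["roleDefinitionId"], reason))
    return missed


# Hypothetical data; real role definition IDs are GUIDs.
assignments = [
    {"principalId": "alice", "roleDefinitionId": "global-admin", "directoryScopeId": "/"},
    {"principalId": "bob", "roleDefinitionId": "user-admin",
     "directoryScopeId": "/administrativeUnits/au-hr"},
    {"principalId": "carol", "roleDefinitionId": "intune-admin", "directoryScopeId": "/"},
]
for row in missed_by_role_targeting(assignments, {"global-admin", "user-admin"}):
    print(row)
# ('bob', 'user-admin', 'scoped assignment ignored by role targeting')
# ('carol', 'intune-admin', 'role not included in policy')
```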

Another issue we sometimes observe is that important protection mechanisms are scoped only to specific resources. One example is requiring phishing-resistant MFA only for Microsoft Admin Portals.

The problem is that these resources often do not provide the coverage administrators expect. For example, Microsoft Admin Portals only covers the web-based admin portals, while sensitive actions may also be performed directly on the Microsoft Graph API[2].

Conditional Access is sometimes referred to as an identity firewall. However, that comparison can be misleading. Unlike many traditional firewall designs, Conditional Access does not provide a simple default-deny model for all authentication activity. Access is generally allowed unless a policy applies to the sign-in and enforces a control or block.

This makes policy scope highly important. Every additional condition (e.g. network locations, device platform, client apps) in a Conditional Access policy reduces the number of authentication events to which it applies. While conditions are often needed, too many of them can unintentionally create gaps in protection. In practice, we regularly see policies that are narrower than the administrators expect, leaving many authentication events outside the intended protection.
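The default-allow behavior and the narrowing effect of each added condition can be illustrated with a toy evaluator. This is a deliberately simplified model, not Microsoft's evaluation engine: sign-ins are plain dictionaries, every policy requires MFA, blocks and other controls are not modelled, and the policy name and platform condition are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

# A sign-in is modelled as a plain dict, e.g. {"platform": "windows"}.
Condition = Callable[[dict], bool]


@dataclass
class Policy:
    name: str
    conditions: list[Condition]  # all conditions must match (logical AND)


def applies(policy: Policy, sign_in: dict) -> bool:
    """A policy only applies if every one of its conditions matches."""
    return all(condition(sign_in) for condition in policy.conditions)


def evaluate(policies: list, sign_in: dict, mfa_done: bool) -> str:
    """Default allow: access is granted unless an applicable policy demands MFA."""
    for policy in policies:
        if applies(policy, sign_in) and not mfa_done:
            return f"MFA required by {policy.name}"
    return "allowed"


# Hypothetical MFA policy that was narrowed with a device platform condition.
require_mfa = Policy("RequireMFA", [lambda s: s.get("platform") == "windows"])

print(evaluate([require_mfa], {"platform": "windows"}, mfa_done=False))  # MFA required by RequireMFA
print(evaluate([require_mfa], {"platform": "linux"}, mfa_done=False))    # allowed
```

The Linux sign-in is not denied; it simply falls outside every policy and is therefore allowed with the password alone.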

A commonly observed example is a Conditional Access policy that includes both Sign-in risk and User risk as conditions. Because these conditions are combined using a logical AND, both must apply at the same time for the policy to take effect. However, the two conditions often do not overlap for the same sign-in: Sign-in risk is evaluated as part of the authentication process, while User risk is usually calculated separately in the background and may only be raised later through offline detection[3]. Consequently, such a policy may be triggered only rarely in practice and may therefore provide less protection than expected.
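The effect of the logical AND can be shown with two hypothetical sign-ins: a risky sign-in that happens before user risk is raised, and a later unremarkable sign-in after offline detection has raised the user risk. The configured risk levels are assumptions, and splitting the conditions into two separate policies is shown only as one possible alternative for comparison.

```python
# Risk levels the policy is configured for (hypothetical configuration).
SIGN_IN_RISK_LEVELS = {"medium", "high"}
USER_RISK_LEVELS = {"high"}


def combined_policy_applies(sign_in_risk: str, user_risk: str) -> bool:
    # Both conditions live in one policy, so they are combined with AND.
    return sign_in_risk in SIGN_IN_RISK_LEVELS and user_risk in USER_RISK_LEVELS


def separate_policies_apply(sign_in_risk: str, user_risk: str) -> bool:
    # One policy per risk type: the sign-in is gated if either policy applies.
    return sign_in_risk in SIGN_IN_RISK_LEVELS or user_risk in USER_RISK_LEVELS


# (sign-in risk, user risk) at the time of each sign-in.
timeline = [("high", "none"), ("none", "high")]
print([combined_policy_applies(s, u) for s, u in timeline])  # [False, False]
print([separate_policies_apply(s, u) for s, u in timeline])  # [True, True]
```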

Even if Conditional Access policies target all users and all resources, this does not necessarily mean that every relevant action is covered.

Some actions, such as registering security information or joining a device, must be addressed in a dedicated policy to be covered. In addition, Microsoft has implemented several built-in bypasses and exceptions. These are likely required to avoid breaking important platform functionality. The difficulty is that they are not always clearly documented, which can lead to incorrect assumptions about the actual protection in place. A well-known example is a built-in bypass for policies that require only a compliant device[4], which still allows limited actions such as reading device information.

As a result, even broadly scoped Conditional Access policies should not automatically be assumed to cover every relevant action.

Based on our observations, most tenants now enforce MFA through Conditional Access policies. However, during assessments we still regularly find that other important Conditional Access policies are missing.

Examples include:

One policy worth highlighting is the restriction of security information registration, as the impact of this gap is sometimes underestimated.

If no dedicated policy protects the Register security information action, an attacker with the user’s password may still be able to register a new MFA factor during sign-in, provided the user has not yet registered any MFA methods. That factor can then be used to satisfy the MFA requirement.
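Whether such a policy exists can be checked from an export of the tenant's policies. The following minimal sketch assumes the policies were exported as JSON in the shape of Microsoft Graph `conditionalAccessPolicy` objects, where a user action target appears in `conditions.applications.includeUserActions`. The two sample policies are hypothetical, and the check deliberately looks only at the target, not at the users, conditions, or grant controls.

```python
REGISTER_SECURITY_INFO = "urn:user:registersecurityinfo"


def registration_policies(policies):
    """Return names of enabled policies targeting Register security information.

    `policies` is a list of dicts shaped like Microsoft Graph
    conditionalAccessPolicy objects. Only the target is checked; users,
    conditions and grant controls still need a manual review.
    """
    names = []
    for policy in policies:
        if policy.get("state") != "enabled":
            continue  # disabled and report-only policies enforce nothing
        apps = (policy.get("conditions") or {}).get("applications") or {}
        if REGISTER_SECURITY_INFO in (apps.get("includeUserActions") or []):
            names.append(policy.get("displayName"))
    return names


tenant_policies = [
    {"displayName": "EnforceMFA", "state": "enabled",
     "conditions": {"applications": {"includeApplications": ["All"],
                                     "includeUserActions": []}}},
    {"displayName": "RestrictSecurityInfo", "state": "enabledForReportingButNotEnforced",
     "conditions": {"applications": {"includeApplications": [],
                                     "includeUserActions": [REGISTER_SECURITY_INFO]}}},
]
if not registration_policies(tenant_policies):
    print("No enforced policy protects security information registration")
```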

The example section later in this post illustrates this issue with a practical scenario.

We regularly see specific IP ranges excluded from important Conditional Access policies, such as MFA requirements. In many cases, these exclusions were introduced to reduce MFA prompts for users. However, they are often unnecessary, particularly in environments using Hybrid Joined or Entra Joined devices, where the Primary Refresh Token (PRT) mechanism already reduces repeated MFA prompts[5].

In some environments, the excluded ranges are also relatively large, for example /24 or /23 networks. Especially in smaller organizations, these ranges often include not only the public IP addresses used by employees, but also other infrastructure segments such as guest Wi-Fi or DMZ networks. As a result, they can unnecessarily weaken the intended protection and increase the risk of abuse.
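Checking which of an organization's own address segments fall inside an excluded range makes this problem visible. The sketch uses Python's standard `ipaddress` module; the excluded /23 and all segment names and addresses are hypothetical.

```python
import ipaddress


def segments_inside_exclusions(excluded_ranges, segments):
    """Map each named address to the excluded ranges that contain it."""
    networks = [ipaddress.ip_network(r) for r in excluded_ranges]
    hits = {}
    for name, address in segments.items():
        ip = ipaddress.ip_address(address)
        containing = [str(net) for net in networks if ip in net]
        if containing:
            hits[name] = containing
    return hits


# Hypothetical trusted location excluded from the MFA policy.
excluded = ["198.51.100.0/23"]
segments = {
    "office egress": "198.51.100.10",
    "guest Wi-Fi": "198.51.101.20",
    "DMZ web server": "198.51.101.200",
    "home user": "192.0.2.55",
}
print(ipaddress.ip_network(excluded[0]).num_addresses)  # 512
for name, ranges in segments_inside_exclusions(excluded, segments).items():
    print(f"{name}: exempt from MFA via {', '.join(ranges)}")
```

If only the office egress address actually needs the exclusion, a single /32 entry would keep the guest Wi-Fi and DMZ segments inside the policy.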

The following attack examples illustrate some of the issues described earlier using practical scenarios. In some cases, the security impact is not immediately obvious, so practical examples help clarify their relevance.

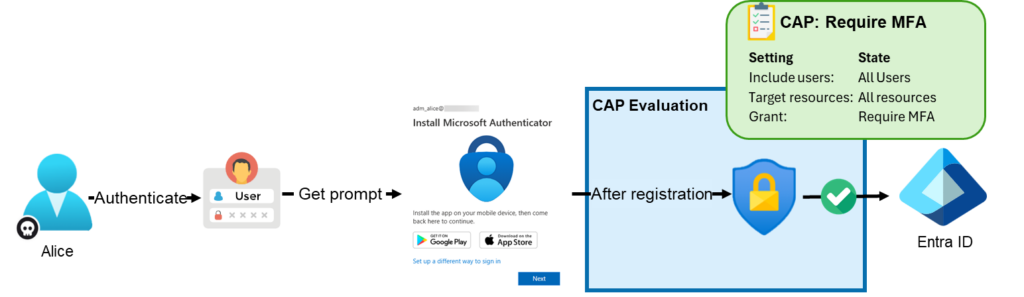

A Conditional Access policy targeting the user action Register security information can restrict under which conditions users are allowed to register MFA methods or use self-service password reset (SSPR)[6].

A common assumption is that the registration of MFA methods only becomes relevant after an attacker has already fully compromised an account, for example through man-in-the-middle (MitM) phishing or token theft. In practice, however, there is another issue that is sometimes overlooked: if a user has no MFA methods registered yet and no policy protects Register security information, the account may effectively only be protected by its password.

As a result, if an attacker is able to compromise the password, for example through password spraying, it may still be possible to register a new MFA factor before the general MFA Conditional Access policy is enforced. The attacker can then use this newly registered factor to satisfy the MFA requirement in later sign-ins.

For this reason, this policy is particularly important for protecting newly created accounts and other accounts that do not yet have any MFA methods registered.
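Candidate accounts for this scenario can be listed from authentication method registration data. The following minimal sketch assumes records with `userPrincipalName` and `isMfaRegistered` fields, loosely shaped like the Microsoft Graph `userRegistrationDetails` report. The accounts reuse the example tenant from this post and are hypothetical, and treating any registration policy as protective is a simplification.

```python
def password_only_registration_candidates(registration_details, has_registration_policy):
    """Return accounts whose first MFA factor could be registered with the password alone.

    Mirrors the condition described above: no MFA method registered yet and no
    policy protecting Register security information. Treating any such policy
    as protective is a simplification; its conditions still need review.
    """
    if has_registration_policy:
        return []
    return [u["userPrincipalName"] for u in registration_details
            if not u.get("isMfaRegistered", False)]


# Hypothetical records, loosely shaped like Microsoft Graph userRegistrationDetails.
details = [
    {"userPrincipalName": "alice@notasecuretenant.test", "isMfaRegistered": False},
    {"userPrincipalName": "adm_bob@notasecuretenant.test", "isMfaRegistered": True},
]
print(password_only_registration_candidates(details, has_registration_policy=False))
# ['alice@notasecuretenant.test']
```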

For example, assume the tenant only has a policy enforcing MFA for all users. Alice’s account was created recently and does not yet have any MFA methods registered. Therefore, an attacker who compromises Alice’s password may still be able to satisfy the MFA requirement by first registering their own MFA factor.

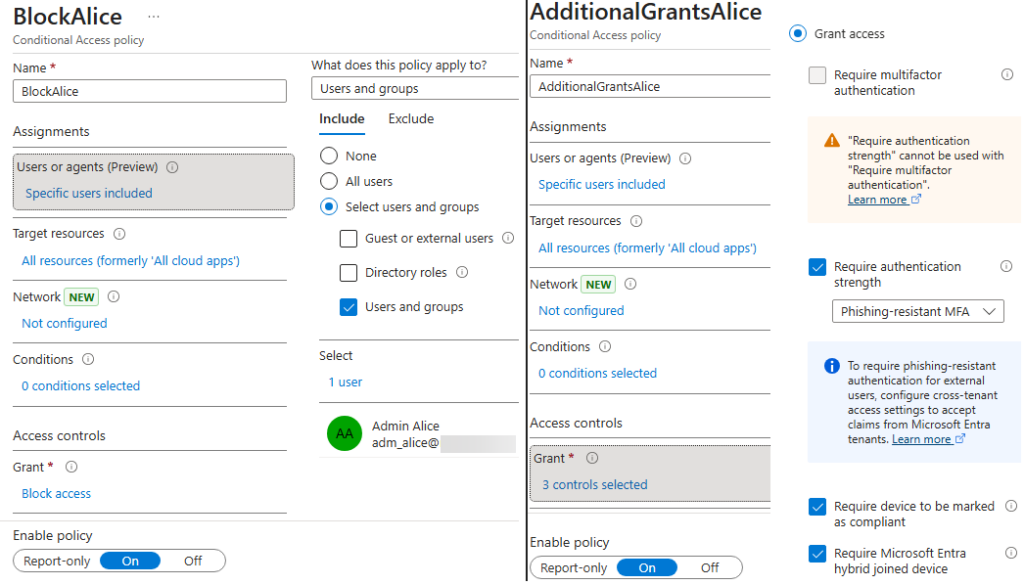

This is even possible if additional Conditional Access requirements are configured, such as requiring a compliant device, requiring a joined device, or applying a policy that would otherwise block Alice’s access completely. The following Conditional Access policy configuration illustrates such a scenario:

In the normal portal sign-in flow, the MFA registration wizard may no longer appear. Instead, the following error is shown:

However, under certain conditions, it may still be possible to use applications with known Conditional Access bypasses[7], for example by using tools such as EntraTokenAid, and trigger an MFA flow that allows a new MFA factor to be registered:

```powershell
PS> invoke-Auth -ClientID 1950a258-227b-4e31-a9cf-717495945fc2 -RedirectURL http://localhost:13824 -api 26a4ae64-5862-427f-a9b0-044e62572a4f -ForceNgcMfa -DisableCAE
[*] Local redirect URL used. Starting local HTTP Server..
[+] HTTP server running on http://localhost:13824/
[i] Listening for OAuth callback for 180 s (HttpTimeout value)
```

In the browser window opened as part of this flow, it is again possible to register a new MFA factor:

Note: In the second scenario, where additional policies would otherwise block Alice's access, the attacker may only be able to register an additional MFA factor, but not directly access any resources. Further access would still depend on the presence of a weakness or bypass in the existing Conditional Access policies.

The key point is that even restrictive Conditional Access policies targeting all resources do not necessarily prevent every action. Some actions may still remain possible and can support further attack steps. It is therefore important to also protect specific user actions through dedicated Conditional Access policies.

Apart from implementing a dedicated Conditional Access policy for security information registration, additional measures are also important:

We regularly observe Conditional Access policies that do not target all resources, but only very specific ones. A common real-world example is applying additional protections, such as specific IP restrictions, device requirements, or phishing-resistant MFA, only to the resource Microsoft Admin Portals with the intention of preventing attackers from performing administrative actions.

The problem with these built-in resource selections is that their actual coverage is not always immediately clear. For example, the Microsoft Admin Portals resource protects the web-based administrative portals, but not other interfaces such as direct access to the Microsoft Graph API through a different OAuth client.
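Policies scoped this narrowly are easy to spot in a policy export. The following minimal sketch again assumes JSON shaped like Microsoft Graph `conditionalAccessPolicy` objects, in which the Microsoft Admin Portals resource appears as the value `MicrosoftAdminPortals` in `includeApplications`. The sample policies are hypothetical.

```python
def admin_portal_only_policies(policies):
    """Return enabled policies whose only target resource is Microsoft Admin Portals.

    Such policies leave other ways of reaching the same data, for example
    Microsoft Graph through a different OAuth client, outside their scope.
    """
    names = []
    for policy in policies:
        if policy.get("state") != "enabled":
            continue
        apps = (policy.get("conditions") or {}).get("applications") or {}
        if (apps.get("includeApplications") or []) == ["MicrosoftAdminPortals"]:
            names.append(policy.get("displayName"))
    return names


tenant_policies = [
    {"displayName": "Require phishing-resistant MFA for admin portals", "state": "enabled",
     "conditions": {"applications": {"includeApplications": ["MicrosoftAdminPortals"]}}},
    {"displayName": "EnforceMFA", "state": "enabled",
     "conditions": {"applications": {"includeApplications": ["All"]}}},
]
print(admin_portal_only_policies(tenant_policies))
# ['Require phishing-resistant MFA for admin portals']
```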

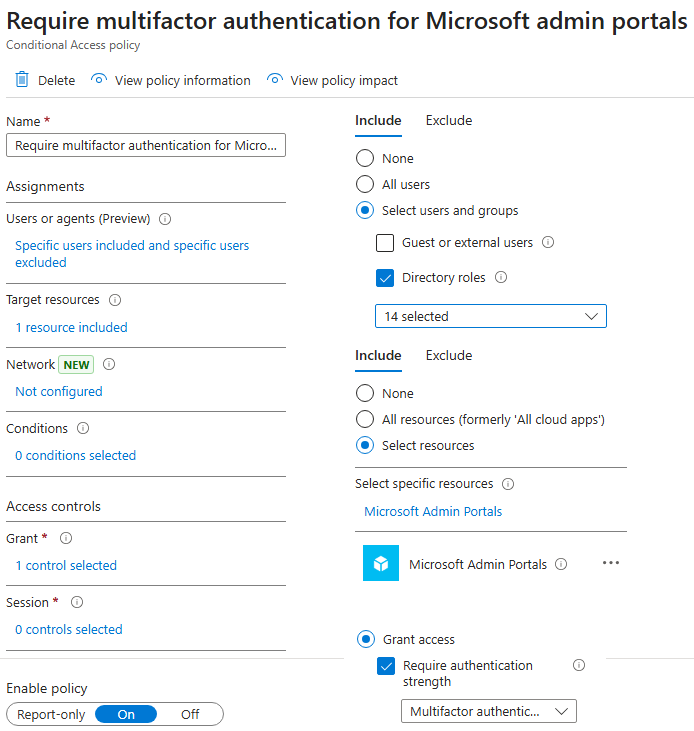

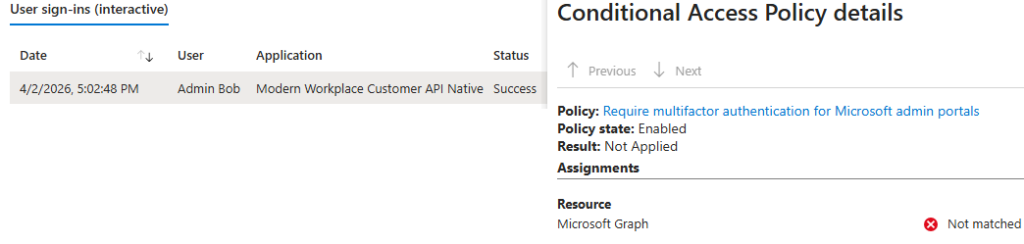

As an example, we use the official Microsoft policy template Require multifactor authentication for Microsoft admin portals. This Conditional Access policy targets Global Administrator and other privileged roles, applies to the resource Microsoft Admin Portals, and requires MFA:

However, if the Global Administrator Bob requests a token for the Microsoft Graph API using the client ID of the first-party application Modern Workplace Customer API Native (2e307cd5-5d2d-4499-b656-a97de9f52708), the policy is not applied. As a result, he can even use the resource owner password credentials flow[8] directly from the console:

```powershell
PS> $tokens = Invoke-ROPC -ClientID 2e307cd5-5d2d-4499-b656-a97de9f52708 -Username adm_bob@notasecuretenant.test -Password "[CUT-BY-COMPASS]" -Api graph.microsoft.com
[*] Starting ROPC flow: API graph.microsoft.com / Client id: 2e307cd5-5d2d-4499-b656-a97de9f52708 / User: adm_bob@notasecuretenant.test
[+] Got an access token and a refresh token
[i] Audience: https://graph.microsoft.com / Expires at: 04/03/2026 17:02:46
PS> $tokens
token_type : Bearer
expires_in : 86399
ext_expires_in : 86399
access_token : eyJ0eXAiOiJKV1QiL[CUT-BY-COMPASS]
Expiration_time : 03.04.2026 17:02:46
scp : Application.Read.All AuditLog.Read.All DeviceManagementApps.ReadWrite.All DeviceManagementConfiguration.ReadWrite.All DeviceManagementManagedDevices.PrivilegedOperations.All DeviceManagementManagedDevices.ReadWrite.All DeviceManagementRBAC.ReadWrite.All DeviceManagementServiceConfig.ReadWrite.All Directory.AccessAsUser.All email Group.ReadWrite.All Mail.Send openid Policy.Read.All Policy.ReadWrite.ConditionalAccess profile Reports.Read.All User.ReadWrite.All WindowsUpdates.ReadWrite.All
tenant : 9f412d6a-xxxx-xxxx-xxxx-32e31a6af459
user : adm_bob@notasecuretenant.test
client_app : Modern Workplace Customer API Native
client_app_id : 2e307cd5-5d2d-4499-b656-a97de9f52708
auth_methods : {pwd}
ip : [CUT-BY-COMPASS]
audience : https://graph.microsoft.com
api : graph.microsoft.com
xms_cc : {CP1}
```

In the corresponding sign-in log, it can be seen that the Conditional Access policy enforcing MFA was not applied:

As a result, Bob can perform administrative tasks directly through the Microsoft Graph API instead of through the portal. For example, he can disable a Conditional Access policy:

```powershell
PS> Connect-MgGraph -AccessToken ($Tokens.access_token | ConvertTo-SecureString -AsPlainText -Force)
Welcome to Microsoft Graph!
Connected via userprovidedaccesstoken access using 2e307cd5-5d2d-4499-b656-a97de9f52708
PS> Get-MgIdentityConditionalAccessPolicy | ft Displayname,ID,State

DisplayName Id                                   State
----------- --                                   -----
EnforceMFA  edafc746-76e3-4a04-9a0d-e9ccb1e5939c enabled

PS> Update-MgIdentityConditionalAccessPolicy -ConditionalAccessPolicyId "edafc746-76e3-4a04-9a0d-e9ccb1e5939c" -BodyParameter @{state = "disabled"}
PS> Get-MgIdentityConditionalAccessPolicy -ConditionalAccessPolicyId "edafc746-76e3-4a04-9a0d-e9ccb1e5939c" | ft Displayname,ID,State

DisplayName Id                                   State
----------- --                                   -----
EnforceMFA  edafc746-76e3-4a04-9a0d-e9ccb1e5939c disabled
```

There are also built-in bypasses that are not clearly documented by Microsoft. For example, Dirk-jan Mollema discovered in 2023 that the OAuth client Microsoft Intune Company Portal (client ID: 9ba1a5c7-f17a-4de9-a1f1-6178c8d51223) could be used to bypass the compliant device requirement of Conditional Access policies.

This behavior may be necessary to support the enrollment of devices that are not yet compliant. The more serious issue was that this first-party application historically had dangerous pre-consented delegated permissions across multiple APIs, which could allow full tenant compromise if the user had sufficient privileges.

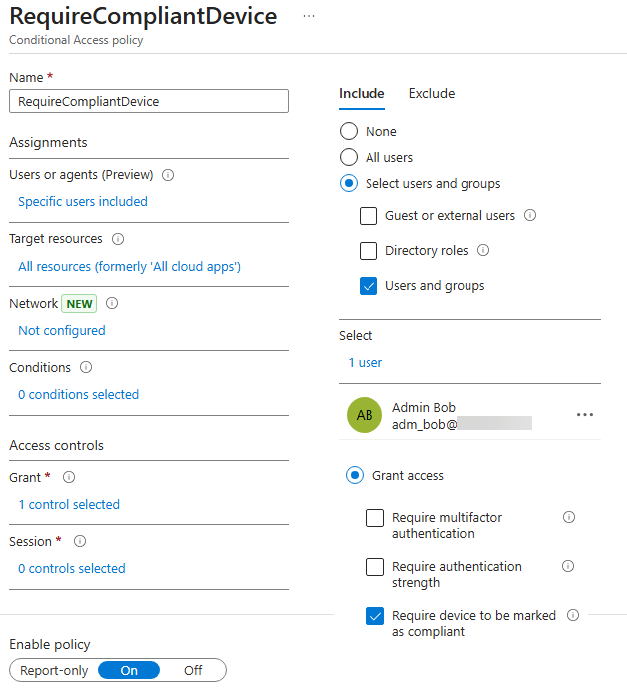

These pre-consented permissions have since been reduced. However, it is still possible, for example, to read all devices through the Microsoft Graph API. Assume there is a policy named RequireCompliantDevice that directly includes Bob, targets all resources, and requires a compliant device:

However, by using the client ID of the Microsoft Intune Company Portal and a valid redirect URI for this application, it is possible to obtain a valid access token for the Microsoft Graph API with certain pre-consented scopes, such as Device.Read.All, without having a compliant device:

```powershell
PS> $tokens = Invoke-Auth -ClientID '9ba1a5c7-f17a-4de9-a1f1-6178c8d51223' -RedirectUrl 'urn:ietf:wg:oauth:2.0:oob'
[*] External redirect URL used
[*] Spawning embedded Browser
[+] Got an AuthCode
[*] Calling the token endpoint
[+] Got an access token and a refresh token
[i] Audience: https://graph.microsoft.com / Expires at: 04/03/2026 22:21:56
PS> $tokens
token_type : Bearer
scope : email openid profile https://graph.microsoft.com/Device.Read.All [CUT-BY-COMPASS]
expires_in : 86399
ext_expires_in : 86399
refresh_in : 43199
access_token : eyJ0eXAiOiJKV1QiLCJ[CUT-BY-COMPASS]
refresh_token : 1.Aa4Aai1Bn2Cu[CUT-BY-COMPASS]
foci : 1
Expiration_time : 03.04.2026 22:21:56
scp : Device.Read.All email openid profile ServicePrincipalEndpoint.Read.All User.Read
tenant : 9f412d6a-xxxx-xxxx-xxxx-32e31a6af459
user : adm_bob@notasecuretenant.test
client_app : Microsoft Intune Company Portal
client_app_id : 9ba1a5c7-f17a-4de9-a1f1-6178c8d51223
auth_methods : {pwd, mfa}
ip : 5[CUT-BY-COMPASS]
audience : https://graph.microsoft.com
api : graph.microsoft.com
xms_cc : {CP1}
```

The token can then be used to read information about all devices in the tenant:

```powershell
PS> Connect-MgGraph -AccessToken ($Tokens.access_token | ConvertTo-SecureString -AsPlainText -Force)
Welcome to Microsoft Graph!
PS> Get-MgDevice | fl DisplayName,OperatingSystem,OperatingSystemVersion,ApproximateLastSignInDateTime,IsCompliant

DisplayName : DESKTOP-OKSUS9V
OperatingSystem : Windows
OperatingSystemVersion : 10.0.19045.5011
ApproximateLastSignInDateTime : 09.09.2025 06:34:00
IsCompliant : False

DisplayName : WSPRZH34
OperatingSystem : Windows
OperatingSystemVersion : 10.0.19045.3808
ApproximateLastSignInDateTime : 09.12.2025 19:59:20
IsCompliant : True

[CUT-BY-COMPASS]
```

Important: Other enforced grant controls, such as MFA or IP restrictions, still apply. This example is intended to illustrate that built-in bypasses exist for specific conditions and can easily be overlooked, particularly where they are not clearly documented by Microsoft. Several such bypasses were identified and documented by Fabian Bader in 2025[9].

For this reason, it is generally advisable not to rely on a single condition such as device compliance alone. Instead, important protections should combine multiple strong conditions using a logical AND, for example MFA and a compliant device.

EntraFalcon is a PowerShell-based assessment and enumeration tool designed to evaluate Microsoft Entra ID environments and identify privileged objects and risky configurations. The tool is open source, available free of charge, and exports results as local interactive HTML reports for offline analysis. Installation instructions and usage details are available on GitHub:

https://github.com/CompassSecurity/EntraFalcon

For Conditional Access, EntraFalcon enumerates all policies in the tenant and provides an overview of their most important settings. This makes it easier to review active policies and their intended purpose without having to click through them one by one in the portal:

The detailed policy view shows relevant policy details such as user coverage, effective inclusions and exclusions, detected warnings, and the configured conditions and grant controls:

The Security Findings Report also evaluates whether important policy types are present, for example for enforcing MFA, blocking the device code flow, blocking legacy authentication, or restricting the registration of security information, and whether they appear to be configured as expected. This includes checks for issues such as not targeting all applications, excluding too many users, adding unnecessary conditions, or not covering privileged roles that are actually in use. If no suitable policy without detected issues is found, the corresponding security finding is shown:

In the latest version, it is also possible to generate a CSV list of users who are not covered by a specific policy by using the -ExportCapUncoveredUsers switch when running EntraFalcon. This can help identify users who fall outside the scope of an important policy:

Disclaimer: A full technical analysis of Conditional Access remains difficult, especially where multiple policies target different identities to enforce the same control. EntraFalcon therefore uses different confidence levels. Findings are marked as Sure where an important control is clearly missing entirely, for example blocking the device code flow or legacy authentication. Findings related to potentially weak configurations are marked as Require Verification. In addition, the coverage statistics in the current version do not fully enumerate all external user inclusion and exclusion types (only normal B2B guests are covered).

Appropriate recommendations depend heavily on the organization, its internal structure, and the services in use. The following points should therefore be understood as general guidance and adapted to the specific environment.

For basic and important protection policies, such as enforcing MFA, it is generally advisable to keep the design simple and the scope broad:

At a minimum, tenants should generally consider policies for the following:

Other recommendations:

Compass Security Blog – Read More