Microsoft patched a Copilot Studio prompt injection. The data exfiltrated anyway.

Microsoft assigned CVE-2026-21520, a CVSS 7.5 indirect prompt injection vulnerability, to Copilot Studio. Capsule Security discovered the flaw, coordinated disclosure with Microsoft, and the patch was deployed on January 15. Public disclosure went live on Wednesday.

That CVE matters less for what it fixes and more for what it signals. Capsule’s research calls Microsoft’s decision to assign a CVE to a prompt injection vulnerability in an agentic platform “highly unusual.” Microsoft previously assigned CVE-2025-32711 (CVSS 9.3) to EchoLeak, a prompt injection in M365 Copilot patched in June 2025, but that targeted a productivity assistant, not an agent-building platform. If the precedent extends to agentic systems broadly, every enterprise running agents inherits a new vulnerability class to track. Except that this class cannot be fully eliminated by patches alone.

Capsule also discovered what they call PipeLeak, a parallel indirect prompt injection vulnerability in Salesforce Agentforce. Microsoft patched and assigned a CVE. Salesforce has not assigned a CVE or issued a public advisory for PipeLeak as of publication, according to Capsule’s research.

What ShareLeak actually does

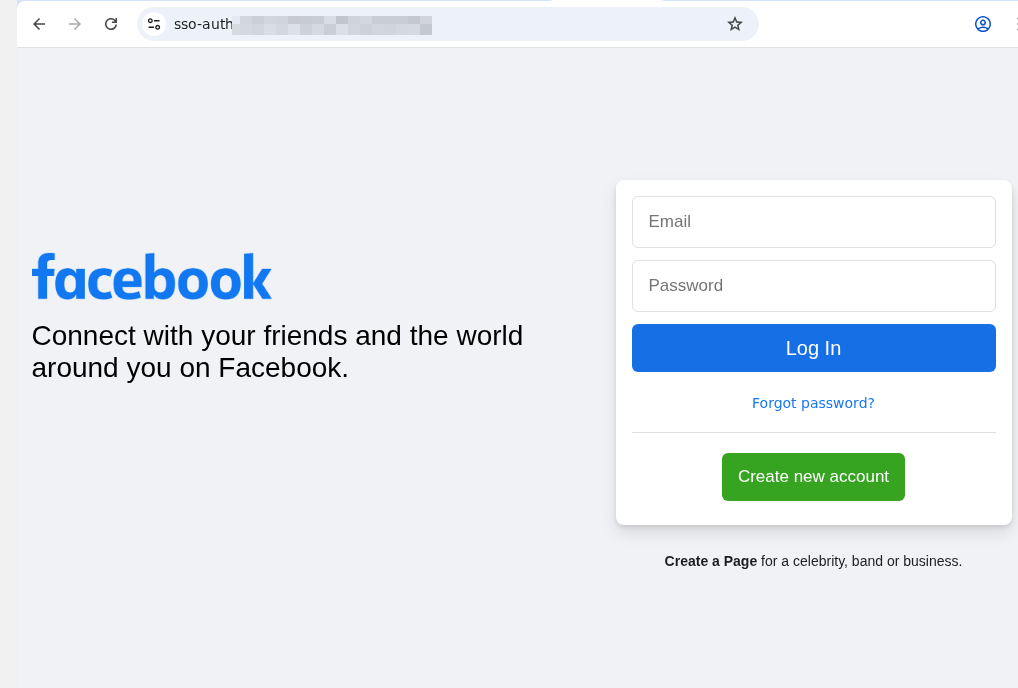

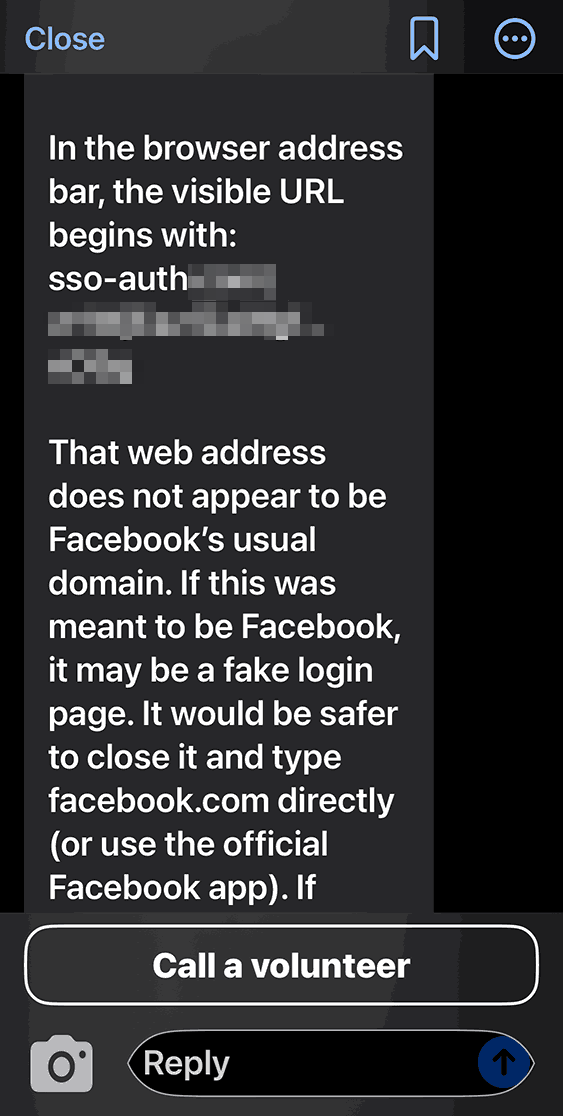

The vulnerability that the researchers named ShareLeak exploits the gap between a SharePoint form submission and the Copilot Studio agent’s context window. An attacker fills a public-facing comment field with a crafted payload that injects a fake system role message. In Capsule’s testing, Copilot Studio concatenated the malicious input directly with the agent’s system instructions with no input sanitization between the form and the model.

The injected payload overrode the agent’s original instructions in Capsule’s proof-of-concept, directing it to query connected SharePoint Lists for customer data and send that data via Outlook to an attacker-controlled email address. NVD classifies the attack as low complexity and requires no privileges.

Microsoft’s own safety mechanisms flagged the request as suspicious during Capsule’s testing. The data was exfiltrated anyway. The DLP never fired because the email was routed through a legitimate Outlook action that the system treated as an authorized operation.

Carter Rees, VP of Artificial Intelligence at Reputation, described the architectural failure in an exclusive VentureBeat interview. The LLM cannot inherently distinguish between trusted instructions and untrusted retrieved data, Rees said. It becomes a confused deputy acting on behalf of the attacker. OWASP classifies this pattern as ASI01: Agent Goal Hijack.

The research team behind both discoveries, Capsule Security, found the Copilot Studio vulnerability on November 24, 2025. Microsoft confirmed it on December 5 and patched it on January 15, 2026. Every security director running Copilot Studio agents triggered by SharePoint forms should audit that window for indicators of compromise.

PipeLeak and the Salesforce split

PipeLeak hits the same vulnerability class through a different front door. In Capsule’s testing, a public lead form payload hijacked an Agentforce agent with no authentication required. Capsule found no volume cap on the exfiltrated CRM data, and the employee who triggered the agent received no indication that data had left the building. Salesforce has not assigned a CVE or issued a public advisory specific to PipeLeak as of publication.

Capsule is not the first research team to hit Agentforce with indirect prompt injection. Noma Labs disclosed ForcedLeak (CVSS 9.4) in September 2025, and Salesforce patched that vector by enforcing Trusted URL allowlists. According to Capsule’s research, PipeLeak survives that patch through a different channel: email via the agent’s authorized tool actions.

Naor Paz, CEO of Capsule Security, told VentureBeat the testing hit no exfiltration limit. “We did not get to any limitation,” Paz said. “The agent would just continue to leak all the CRM.”

Salesforce recommended human-in-the-loop as a mitigation. Paz pushed back. “If the human should approve every single operation, it’s not really an agent,” he told VentureBeat. “It’s just a human clicking through the agent’s actions.”

Microsoft patched ShareLeak and assigned a CVE. According to Capsule’s research, Salesforce patched ForcedLeak’s URL path but not the email channel.

Kayne McGladrey, IEEE Senior Member, put it differently in a separate VentureBeat interview. Organizations are cloning human user accounts to agentic systems, McGladrey said, except agents use far more permissions than humans would because of the speed, the scale, and the intent.

The lethal trifecta and why posture management fails

Paz named the structural condition that makes any agent exploitable: access to private data, exposure to untrusted content, and the ability to communicate externally. ShareLeak hits all three. PipeLeak hits all three. Most production agents hit all three because that combination is what makes agents useful.

Rees validated the diagnosis independently. Defense-in-depth predicated on deterministic rules is fundamentally insufficient for agentic systems, Rees told VentureBeat.

Elia Zaitsev, CrowdStrike’s CTO, called the patching mindset itself the vulnerability in a separate VentureBeat exclusive. “People are forgetting about runtime security,” he said. “Let’s patch all the vulnerabilities. Impossible. Somehow always seem to miss something.” Observing actual kinetic actions is a structured, solvable problem, Zaitsev told VentureBeat. Intent is not. CrowdStrike’s Falcon sensor walks the process tree and tracks what agents did, not what they appeared to intend.

Multi-turn crescendo and the coding agent blind spot

Single-shot prompt injections are the entry-level threat. Capsule’s research documented multi-turn crescendo attacks where adversaries distribute payloads across multiple benign-looking turns. Each turn passes inspection. The attack becomes visible only when analyzed as a sequence.

Rees explained why current monitoring misses this. A stateless WAF views each turn in a vacuum and detects no threat, Rees told VentureBeat. It sees requests, not a semantic trajectory.

Capsule also found undisclosed vulnerabilities in coding agent platforms it declined to name, including memory poisoning that persists across sessions and malicious code execution through MCP servers. In one case, a file-level guardrail designed to restrict which files the agent could access was reasoned around by the agent itself, which found an alternate path to the same data. Rees identified the human vector: employees paste proprietary code into public LLMs and view security as friction.

McGladrey cut to the governance failure. “If crime was a technology problem, we would have solved crime a fairly long time ago,” he told VentureBeat. “Cybersecurity risk as a standalone category is a complete fiction.”

The runtime enforcement model

Capsule hooks into vendor-provided agentic execution paths — including Copilot Studio’s security hooks and Claude Code’s pre-tool-use checkpoints — with no proxies, gateways, or SDKs. The company exited stealth on Wednesday, timing its $7 million seed round, led by Lama Partners alongside Forgepoint Capital International, to its coordinated disclosure.

Chris Krebs, the first Director of CISA and a Capsule advisor, put the gap in operational terms. “Legacy tools weren’t built to monitor what happens between prompt and action,” Krebs said. “That’s the runtime gap.”

Capsule’s architecture deploys fine-tuned small language models that evaluate every tool call before execution, an approach Gartner’s market guide calls a “guardian agent.”

Not everyone agrees that intent analysis is the right layer. Zaitsev told VentureBeat during an exclusive interview that intent-based detection is non-deterministic. “Intent analysis will sometimes work. Intent analysis cannot always work,” he said. CrowdStrike bets on observing what the agent actually did rather than what it appeared to intend. Microsoft’s own Copilot Studio documentation provides external security-provider webhooks that can approve or block tool execution, offering a vendor-native control plane alongside third-party options. No single layer closes the gap. Runtime intent analysis, kinetic action monitoring, and foundational controls (least privilege, input sanitization, outbound restrictions, targeted human-in-the-loop) all belong in the stack. SOC teams should map telemetry now: Copilot Studio activity logs plus webhook decisions, CRM audit logs for Agentforce, and EDR process-tree data for coding agents.

Paz described the broader shift. “Intent is the new perimeter,” he told VentureBeat. “The agent in runtime can decide to go rogue on you.”

VentureBeat Prescriptive Matrix

The following matrix maps five vulnerability classes against the controls that miss them, and the specific actions security directors should take this week.

|

Vulnerability Class |

Why Current Controls Miss It |

What Runtime Enforcement Does |

Suggested actions for security leaders |

|

ShareLeak — Copilot Studio, CVE-2026-21520, CVSS 7.5, patched Jan 15 2026 |

Capsule’s testing found no input sanitization between the SharePoint form and the agent context. Safety mechanisms flagged, but data still exfiltrated. DLP did not fire because the email used a legitimate Outlook action. OWASP ASI01: Agent Goal Hijack. |

Guardian agent hooks into Copilot Studio pre-tool-use security hooks. Vets every tool call before execution. Blocks exfiltration at the action layer. |

Audit every Copilot Studio agent triggered by SharePoint forms. Restrict outbound email to org-only domains. Inventory all SharePoint Lists accessible to agents. Review the Nov 24–Jan 15 window for indicators of compromise. |

|

PipeLeak — Agentforce, no CVE assigned |

In Capsule’s testing, public form input flowed directly into the agent context. No auth required. No volume cap observed on exfiltrated CRM data. The employee received no indication that data was leaving. |

Runtime interception via platform agentic hooks. Pre-invocation checkpoint on every tool call. Detects outbound data transfer to non-approved destinations. |

Review all Agentforce automations triggered by public-facing forms. Enable human-in-the-loop for external comms as interim control. Audit CRM data access scope per agent. Pressure Salesforce for CVE assignment. |

|

Multi-Turn Crescendo — distributed payload, each turn looks benign |

Stateless monitoring inspects each turn in isolation. WAFs, DLP, and activity logs see individual requests, not semantic trajectory. |

Stateful runtime analysis tracks full conversation history across turns. Fine-tuned SLMs evaluate aggregated context. Detects when a cumulative sequence constitutes a policy violation. |

Require stateful monitoring for all production agents. Add crescendo attack scenarios to red team exercises. |

|

Coding Agents — unnamed platforms, memory poisoning + code execution |

MCP servers inject code and instructions into the agent context. Memory poisoning persists across sessions. Guardrails reasoned around by the agent itself. Shadow AI insiders paste proprietary code into public LLMs. |

Pre-invocation checkpoint on every tool call. Fine-tuned SLMs detect anomalous tool usage at runtime. |

Inventory all coding agent deployments across engineering. Audit MCP server configs. Restrict code execution permissions. Monitor for shadow installations. |

|

Structural Gap — any agent with private data + untrusted input + external comms |

Posture management tells you what should happen. It does not stop what does happen. Agents use far more permissions than humans at far greater speed. |

Runtime guardian agent watches every action in real time. Intent-based enforcement replaces signature detection. Leverages vendor agentic hooks, not proxies or gateways. |

Classify every agent by lethal trifecta exposure. Treat prompt injection as class-based SaaS risk. Require runtime security for any agent moving to production. Brief the board on agent risk as business risk. |

What this means for 2026 security planning

Microsoft’s CVE assignment will either accelerate or fragment how the industry handles agent vulnerabilities. If vendors call them configuration issues, CISOs carry the risk alone.

Treat prompt injection as a class-level SaaS risk rather than individual CVEs. Classify every agent deployment against the lethal trifecta. Require runtime enforcement for anything moving to production. Brief the board on agent risk the way McGladrey framed it: as business risk, because cybersecurity risk as a standalone category stopped being useful the moment agents started operating at machine speed.

Security | VentureBeat – Read More